Are you getting blocked by Cloudflare while scraping with Python? You can bypass it using Cloudscraper.

In this web scraping tutorial, you'll learn how to bypass Cloudflare using Cloudscraper. We'll also cover its features, the common errors you may encounter, and how to fix them.

What Is Cloudscraper?

Cloudscraper is a Python web scraping library built exclusively for retrieving data from Cloudflare-protected websites. Its methods work similarly to the Requests library, but it features patches to mimic parts of popular browsers like Chrome and Firefox.

Cloudscraper's simplicity, potential to bypass Cloudflare's security, and compatibility with parsers like BeautifulSoup make it a valuable data extraction tool.

Cloudscraper aims to help you bypass Cloudflare's "I'm Under Attack Mode" interstitial pages like the one below:

How Does Cloudscraper Work?

Cloudscraper spoofs part of a regular browser to bypass Cloudflare's protections. It achieves this by using browser-like headers, simulating a browser's behavior in handling SSL/TLS connections, and solving JavaScript challenges.

This behavior allows your scraper to appear as a natural user, automatically handling Cloudflare's JavaScript-based security measures without manually deobfuscating Cloudflare's JavaScript.

Your Starting Point: Getting Blocked With Python Requests

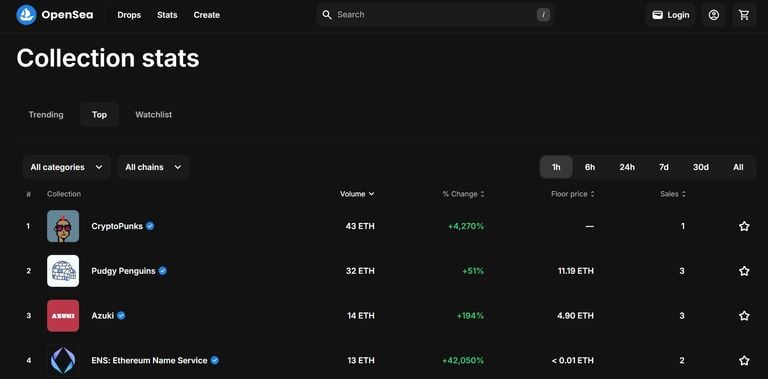

Before we get to the solution, let's assess the problem of Cloudflare blocking your Python Requests scraper. As proof, we tried to scrape OpenSea's NFT Collection Stats, a Cloudflare-protected web page, with Python's Requests.

We sent an HTTP request to access the target website:

# pip3 install requests
import requests

# send a request
response = requests.get("https://opensea.io/rankings")

# get the response status code and text
print(f"The status code is {response.status_code}")
print(response.text)

Here's what we got, showing that Requests got blocked:

The status code is 403

<!DOCTYPE html>

<html lang="en-US">

<head>

<title>Access denied</title>

<!-- ... -->

</head>

<body>

<!-- ... -->

</body>

</html>

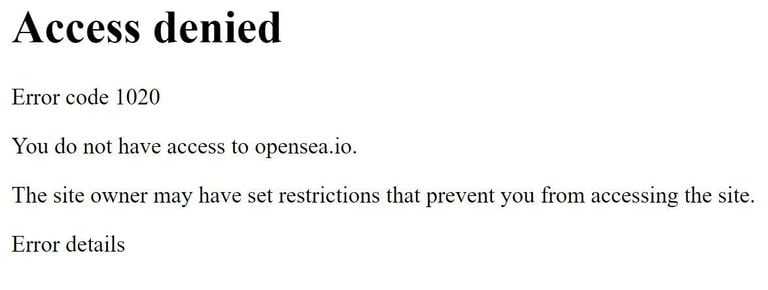

The result above shows a 403 error status code. Instead of OpenSea's Collection stats page, Cloudflare served an "Access denied" page. This issue is common in web scraping, as explored in the 403 web scraping error article.

To get a clearer understanding, we saved the HTML response locally so we could view it in a browser. Here's how it looks:

The error happened because Cloudflare detected our request as bot-like and blocked it. To bypass this Cloudflare error page, you must appear as human as possible. Cloudscraper can help you do this to some extent. We'll explain more in the next section.
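To tell a block page apart from real content in your own scripts, you can check the status code together with the page title. The sketch below is a heuristic only: the helper name `looks_blocked` is hypothetical, the title markers are simply the ones seen in the blocked responses in this tutorial, and treating 503 as a block status is an assumption.

```python
import re

# Title strings taken from the blocked responses shown in this tutorial.
# Treating them as block markers is an assumption, not an official list.
BLOCK_TITLES = ("access denied", "just a moment")

# This tutorial only shows 403; including 503 is an assumption.
BLOCK_STATUS_CODES = (403, 503)


def looks_blocked(status_code: int, html: str) -> bool:
    """Heuristically decide whether a response is a Cloudflare block page."""
    if status_code not in BLOCK_STATUS_CODES:
        return False
    # Pull the <title> text out of the HTML, if there is one.
    match = re.search(r"<title>(.*?)</title>", html, re.IGNORECASE | re.DOTALL)
    title = match.group(1).strip().lower() if match else ""
    return any(marker in title for marker in BLOCK_TITLES)
```

You could call it as `looks_blocked(response.status_code, response.text)` right after a request and decide whether to retry with a different setup.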

How to Bypass Cloudflare Using Cloudscraper in Python

Let's review the steps of using Python Cloudscraper, from requesting a target website to parsing its HTML to extract data.

Cloudscraper is no longer actively maintained and does not keep up with recent Cloudflare updates. It might not bypass Cloudflare in all cases as expected.

1. Install Cloudscraper

First, you'll need Python 3. Keep in mind that some systems have it pre-installed.

After that, install Cloudscraper and BeautifulSoup (to parse the returned HTML response):

pip3 install cloudscraper beautifulsoup4

Now, let's apply the installed libraries.

2. Use Cloudscraper in Python

A lot of work goes into bypassing Cloudflare protection with Python. However, with Cloudscraper, you don't need to worry about what goes on behind the scenes. Instead, you can call the scraper function and wait a few seconds to access the target website.

Here's how to do it.

- Import Cloudscraper and BeautifulSoup:

# pip3 install cloudscraper beautifulsoup4
from bs4 import BeautifulSoup
import cloudscraper

- Create a Cloudscraper instance with the browser option and define your target website:

# ...
# create a cloudscraper instance
scraper = cloudscraper.create_scraper(
    browser={
        "browser": "chrome",
        "platform": "windows",
    },
)

# specify the target URL
url = "https://opensea.io/rankings"

- Access the website to retrieve its HTML content:

# ...
# request the target website
response = scraper.get(url)

# get the response status code
print(f"The status code is {response.status_code}")

3. Scraping and Parsing a Website

After retrieving the website's HTML response, the next step is to parse it with BeautifulSoup and extract specific data.

To do so, parse the HTML content with BeautifulSoup and use the select_one method to get the page description:

# ...
# parse the returned HTML
soup = BeautifulSoup(response.text, "html.parser")

# get the description element
page_description = soup.select_one(".font-semibold.text-display-md.leading-display-md")

# print the description text
print(page_description.text)

Combine all the snippets, and you'll get the following final code:

# pip3 install cloudscraper beautifulsoup4
from bs4 import BeautifulSoup
import cloudscraper

# create a cloudscraper instance
scraper = cloudscraper.create_scraper(
    browser={
        "browser": "chrome",
        "platform": "windows",
    },
)

# specify the target URL
url = "https://opensea.io/rankings"

# request the target website
response = scraper.get(url)

# get the response status code
print(f"The status code is {response.status_code}")

# parse the returned HTML
soup = BeautifulSoup(response.text, "html.parser")

# get the description element
page_description = soup.select_one(".font-semibold.text-display-md.leading-display-md")

# print the description text
print(page_description.text)

The code outputs the response status code and page description, as shown:

The status code is 200

Collection stats

Great job! You've successfully bypassed your first Cloudflare protection.
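One caveat with the script above: BeautifulSoup's `select_one` returns `None` when nothing matches, so `page_description.text` raises an `AttributeError` if the page layout changes or you receive a block page instead. A minimal guard could look like this, where the helper name `extract_text` is hypothetical and `soup` is any parsed BeautifulSoup document:

```python
from typing import Optional


def extract_text(soup, selector: str) -> Optional[str]:
    """Return the stripped text of the first match, or None if nothing matches."""
    element = soup.select_one(selector)
    if element is None:
        # No element matched the CSS selector, so there is no text to return.
        return None
    return element.get_text(strip=True)


# Usage with the soup object from the script above:
# description = extract_text(soup, ".font-semibold.text-display-md.leading-display-md")
# print(description or "Description not found")
```

Returning `None` instead of raising lets the calling code decide whether a missing element means a layout change or a blocked request.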

Cloudscraper Features

Cloudscraper allows you to add more functionalities to your web scraper to mimic natural user behavior. These include the User Agent, cookies, CAPTCHA-solving services, etc.

Let's look at a few in a bit more detail.

Cloudscraper Proxy

Proxies are essential for visiting a website from multiple IP addresses and increasing your anonymity to avoid IP bans or geo-restriction, which can result in a Cloudscraper 403 Cloudflare error.

To set up a Cloudscraper proxy, you only need to pass the proxies argument in your request, just like you would with Python's Requests. Here's an example:

# pip3 install cloudscraper beautifulsoup4
from bs4 import BeautifulSoup
import cloudscraper

# create a cloudscraper instance
scraper = cloudscraper.create_scraper(
    browser={
        "browser": "chrome",
        "platform": "windows",
    }
)

# specify proxies
proxy = {
    "http": "http://<PROXY_IP_ADDRESS>:<PROXY_PORT>",
    "https": "https://<PROXY_IP_ADDRESS>:<PROXY_PORT>",
}

# specify the target URL
url = "https://opensea.io/rankings"

# request the target website with the proxy address
response = scraper.get(url, proxies=proxy)

# ... your scraping logic

Keep in mind that free proxies are unreliable due to their short lifespan. Ensure you use premium proxies for the best result.
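Premium proxies usually require a username and password. Here's a small sketch of building the proxies dictionary with credentials. The helper name `build_proxies` is hypothetical, and it assumes your provider exposes a plain-HTTP proxy endpoint that also tunnels HTTPS traffic, so check your provider's documentation for the exact scheme.

```python
from typing import Dict, Optional
from urllib.parse import quote


def build_proxies(host: str, port: int,
                  username: Optional[str] = None,
                  password: Optional[str] = None) -> Dict[str, str]:
    """Build a Requests-style proxies mapping for a single proxy server."""
    credentials = ""
    if username and password:
        # Percent-encode credentials so characters like "@" or ":" stay valid.
        credentials = f"{quote(username, safe='')}:{quote(password, safe='')}@"
    # Assumption: the proxy accepts plain-HTTP connections and tunnels HTTPS
    # traffic, so the same proxy URL is used for both schemes.
    proxy_url = f"http://{credentials}{host}:{port}"
    return {"http": proxy_url, "https": proxy_url}


# Usage with the scraper from the example above:
# response = scraper.get(url, proxies=build_proxies("<PROXY_IP_ADDRESS>", 8080))
```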

To learn more, check out our article on the best web scraping proxies and our detailed guide on adding proxies to Cloudscraper.

Get Around CAPTCHA With Cloudscraper

Cloudscraper also supports integrations with third-party CAPTCHA solvers, including 2Captcha, Anti Captcha, Capsolver, CapMonster Cloud, Death By Captcha, and 9kw.

To create a Cloudscraper CAPTCHA solver, pass the captcha dictionary as a Cloudscraper instance argument with two keys: your provider and authorization credentials. Here's an example using 2Captcha:

# pip3 install cloudscraper beautifulsoup4
# ...
# create a cloudscraper instance
scraper = cloudscraper.create_scraper(
    # ...,
    captcha={
        "provider": "2captcha",
        "api_key": "<YOUR_2Captcha_API_KEY>",
    },
)

# specify the target URL
url = "https://opensea.io/rankings"

# request the target website
response = scraper.get(url)

# ... your scraping logic

The above code will use 2Captcha's service to solve any CAPTCHA encountered during the request.
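If you'd rather not hard-code the API key in your script, you can read it from an environment variable and build the captcha dictionary from that. This is only a sketch: the helper name `captcha_settings` and the variable name `CAPTCHA_API_KEY` are hypothetical choices, not something Cloudscraper defines.

```python
import os
from typing import Dict


def captcha_settings(provider: str = "2captcha",
                     env_var: str = "CAPTCHA_API_KEY") -> Dict[str, str]:
    """Build the captcha dictionary, reading the API key from the environment."""
    api_key = os.environ.get(env_var)
    if not api_key:
        # Fail early with a clear message instead of sending an empty key.
        raise RuntimeError(f"Set the {env_var} environment variable first")
    return {"provider": provider, "api_key": api_key}


# Usage:
# scraper = cloudscraper.create_scraper(captcha=captcha_settings())
```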

Cloudscraper Headers: User Agent

Cloudscraper lets you specify which browser and device type you want to emulate. To do that, pass a browser dictionary containing the browser and platform keys to the create_scraper() function.

For instance, our previous scraper mimicked a Chrome browser on a Windows operating system. We've set an extra desktop parameter to True in the example below to use the browser in desktop mode:

# pip3 install cloudscraper beautifulsoup4
# ...
# create a cloudscraper instance
scraper = cloudscraper.create_scraper(
    browser={
        "browser": "chrome",
        "platform": "windows",
        "desktop": True,
    },
)

# specify the target URL
url = "https://opensea.io/rankings"

# request the target website
response = scraper.get(url)

# ... your scraping logic

Cloudscraper Sessions

When you visit a Cloudflare-protected website with Cloudscraper using Python, it sleeps for 5 seconds by default to solve JavaScript challenges under the hood. After that, you can use the existing Cloudflare sessions to keep scraping the target website.

Cloudscraper can also borrow a Requests Session object to maintain a session. To do that, create a session with the Requests library and pass it as an argument to the create_scraper() function:

# pip3 install cloudscraper beautifulsoup4 requests
# ...
import requests

# create a Requests session
session = requests.Session()

# create a cloudscraper instance and add the Requests session
scraper = cloudscraper.create_scraper(
    # ...,
    sess=session,
)

The above scraper now uses the Requests session.

Cloudscraper itself handles sessions internally. One downside of borrowing the Requests session is that it may reduce Cloudscraper's default browser spoofing techniques, resulting in potential blocking.
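Since a Cloudscraper instance keeps its own cookies between calls, the simplest way to benefit from an existing session is to reuse one scraper for every page rather than creating a new one per request. A rough sketch, where the helper name `fetch_status_codes` is hypothetical and `scraper` is the object returned by `create_scraper()`:

```python
from typing import Dict, Iterable


def fetch_status_codes(scraper, urls: Iterable[str]) -> Dict[str, int]:
    """Request each URL through the same scraper and record its status code."""
    results = {}
    for url in urls:
        # Reusing one scraper means cookies set by earlier responses
        # are sent along with the later requests.
        response = scraper.get(url)
        results[url] = response.status_code
    return results


# Usage:
# scraper = cloudscraper.create_scraper()
# print(fetch_status_codes(scraper, ["https://opensea.io/rankings"]))
```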

Cloudscraper Non-Default Features

Cloudscraper has many non-default features you can pass as an argument to built-in functions, such as create_scraper(), get_tokens(), and get_cookie_string().

We've already touched on some of these non-default features. Here are some more examples:

- Browser/User Agent filtering.

- Cookies.

- CAPTCHA.

- Delays.

- JavaScript engines and interpreters.

- Platform.

Say you want to bypass a Cloudflare JavaScript challenge and wait for 10 seconds while appearing as a Chrome User Agent on Windows. You'll need a JavaScript engine and some of the following parameters:

# ...
# create a cloudscraper instance
scraper = cloudscraper.create_scraper(
    interpreter="nodejs",
    delay=10,
    browser={
        "browser": "chrome",
        "platform": "windows",
        "desktop": True,
    },
    captcha={
        "provider": "2captcha",
        "api_key": "<YOUR_2Captcha_API_KEY>",
    },
)

The mobile and desktop parameters are "True" by default, so you must turn one off if you want only the other.
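To make that choice explicit, you could build the browser dictionary with a small helper that always sets both flags so that exactly one of them is on. The helper name `browser_profile` is hypothetical, and the "android" platform in the usage line is our assumption about a sensible value for a mobile profile.

```python
from typing import Dict, Union


def browser_profile(browser: str = "chrome", platform: str = "windows",
                    mobile: bool = False) -> Dict[str, Union[str, bool]]:
    """Build a browser dictionary with exactly one of mobile/desktop enabled."""
    return {
        "browser": browser,
        "platform": platform,
        # Set both flags explicitly instead of relying on the defaults.
        "mobile": mobile,
        "desktop": not mobile,
    }


# Usage:
# scraper = cloudscraper.create_scraper(browser=browser_profile())
# mobile_scraper = cloudscraper.create_scraper(
#     browser=browser_profile(platform="android", mobile=True)
# )
```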

Cloudscraper also has a list of supported JavaScript engines and third-party CAPTCHA solvers. The Cloudscraper PyPI documentation provides more details.

Other Features

Other Cloudscraper features include debug, allow_brotli, and cryptography. For more details, check out its documentation.

Common Errors

Below are some common errors you may encounter when scraping that might result in your Cloudscraper not working.

No Module Named Cloudscraper

A common error is getting a "No module named 'cloudscraper'" output in the console. It indicates that the Python interpreter can't find the Cloudscraper module, even though you've installed it.

A likely cause of the "No module named 'cloudscraper'" error is that you're in the wrong Python virtual environment or have switched to another Python version where Cloudscraper isn't installed.

Here's how to solve the No module named cloudscraper error in Python.

Cloudscraper Module Can't Be Loaded in Python

The log message "cloudflare plugin: cloudscraper module can't be loaded in Python" indicates an issue with the Python environment. First, verify that the module is installed in the correct Python virtual environment.

Use the following command to verify that Cloudscraper is available in the current virtual environment:

pip3 show cloudscraper

If running Cloudscraper on a server, such as Nginx, ensure you point the server to the Python virtual environment path where you've installed Cloudscraper.
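You can also run the same check from inside Python, which helps when you aren't sure which interpreter your script or server actually uses. The snippet below is a small diagnostic sketch using only the standard library. The function name `module_report` is hypothetical.

```python
import importlib.util
import sys
from typing import Dict, Union


def module_report(name: str = "cloudscraper") -> Dict[str, Union[str, bool]]:
    """Report which interpreter is running and whether it can find a module."""
    # find_spec returns None when the module isn't importable from this interpreter.
    spec = importlib.util.find_spec(name)
    return {
        "interpreter": sys.executable,
        "installed": spec is not None,
    }


print(module_report())
```

If "installed" is False, install Cloudscraper with the interpreter shown in the report, for example by running that interpreter with `-m pip install cloudscraper`.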

Cloudscraper 403 Forbidden

A Cloudscraper 403 forbidden error is common when scraping with the library. It means the server understands your request but won't honor it. The Cloudflare 403 error happens when Cloudflare denies your Cloudscraper script access to a target website.

Check out our article on bypassing the Cloudscraper 403 error for how to resolve this issue.
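When a 403 comes from an IP ban rather than from a failed challenge, retrying through a different proxy may help. The sketch below tries each proxy in a pool until a response isn't a 403. The helper name `get_with_proxy_rotation` is hypothetical, it doesn't handle network exceptions, and it won't help if Cloudflare is rejecting Cloudscraper itself.

```python
from typing import Dict, Iterable


def get_with_proxy_rotation(scraper, url: str,
                            proxy_pool: Iterable[Dict[str, str]]):
    """Try each proxy in turn and return the first response that isn't a 403.

    Returns the last 403 response if every proxy is refused,
    or None if the pool is empty.
    """
    last_response = None
    for proxies in proxy_pool:
        last_response = scraper.get(url, proxies=proxies)
        if last_response.status_code != 403:
            break
    return last_response


# Usage with a scraper created by cloudscraper.create_scraper():
# pool = [
#     {"http": "http://<PROXY_1>:<PORT>", "https": "http://<PROXY_1>:<PORT>"},
#     {"http": "http://<PROXY_2>:<PORT>", "https": "http://<PROXY_2>:<PORT>"},
# ]
# response = get_with_proxy_rotation(scraper, "https://opensea.io/rankings", pool)
```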

Can Cloudscraper Bypass Newer Cloudflare Versions?

Cloudflare frequently updates its bot protection techniques, so let's see how Cloudscraper fights against its newer versions.

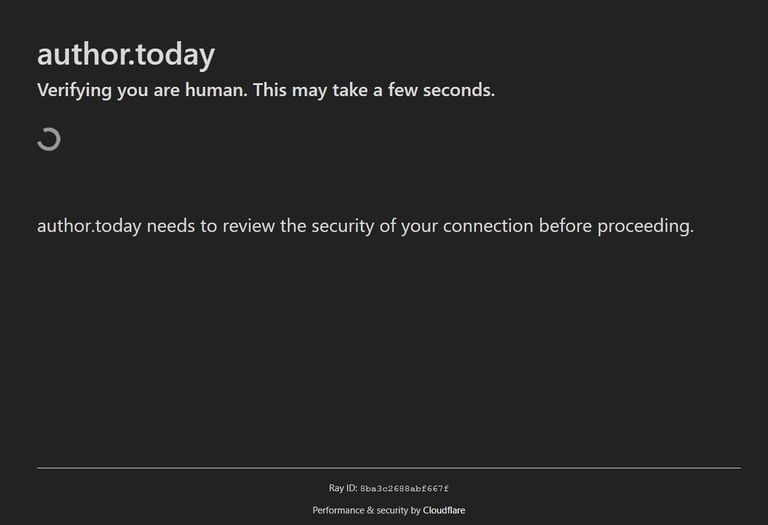

For this example, we'll try to scrape Author.Today, a website that uses a recent Cloudflare version.

When you visit this website in a browser, it automatically redirects you to the Cloudflare waiting room, where it checks whether your connection is secure:

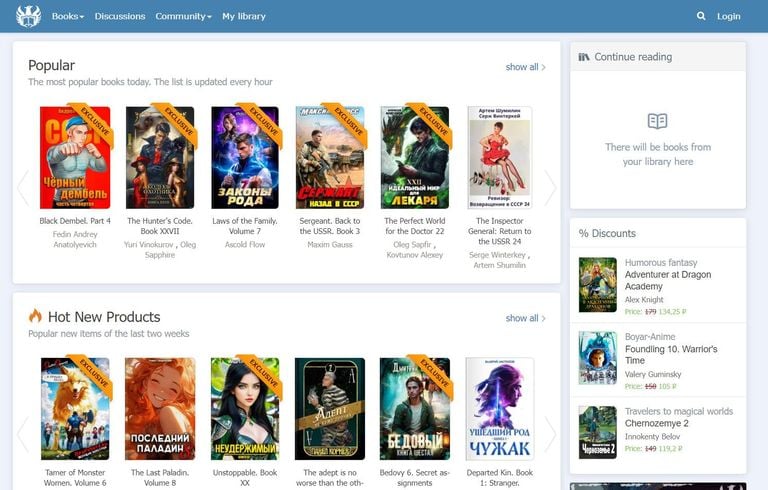

Since you're sending this request from an actual browser, Cloudflare will accept your connection and redirect you to the original home page:

Now, try accessing this website's content with Cloudscraper:

# pip3 install cloudscraper
import cloudscraper

# create a cloudscraper instance
scraper = cloudscraper.create_scraper(
    browser={
        "browser": "chrome",
        "platform": "windows",
    },
)

# specify the target URL
url = "https://author.today/"

# request the target website
response = scraper.get(url)

# get the response status code
print(f"The status code is {response.status_code}")

# print the response HTML
print(response.text)

The above code outputs the following, indicating that Cloudscraper got blocked by Cloudflare:

The status code is 403

<!DOCTYPE html>

<html lang="en-US">

<head>

<title>Just a moment...</title>

<!-- ... -->

</head>

<body>

<!-- ... -->

</body>

</html>

So, how can you solve this problem? Cloudflare's bot detection adapts quickly to open-source bypass tools and detects them easily. The only way past it is to imitate natural user behavior. You can attempt that with browser automation tools like Selenium or Playwright running a headless browser, alongside valid HTTP headers.

Moreover, if you're facing challenges with Cloudflare's bot detection while using Scrapy, integrating with Cloudscraper can indeed be an effective solution.

However, these approaches also have their limitations and don't always work.

Fortunately, there's a way out! The next section discusses an all-in-one alternative.

What Is The Best Cloudscraper Alternative?

If you've run into trouble with newer Cloudflare versions, it's time to switch tools!

ZenRows is a powerful web scraping API that helps with bypassing Cloudflare, regardless of its frequent updates. It features auto-rotating premium proxies, optimized request headers, geo-targeting, anti-CAPTCHA and anti-bot auto-bypass, and more.

ZenRows also has headless browser features, allowing you to execute human interactions and scrape dynamically rendered content easily. You can also access its dedicated residential proxy service under a single price cap.

Let's try using ZenRows to scrape the previous target website that blocked Cloudscraper!

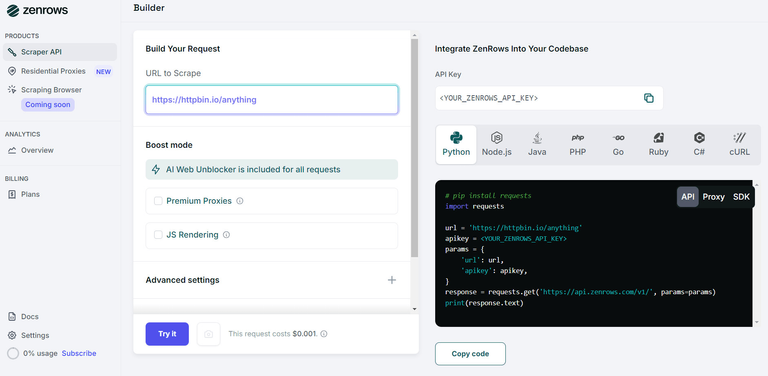

Sign up to open the ZenRows Request Builder. Paste the target URL in the link box, and activate Premium Proxies and JS Rendering. Choose Python as your programming language, and select the API connection mode. Copy and paste the generated code into your Python scraper script.

Here's what the generated code looks like:

# pip install requests
import requests

url = "https://author.today/"
apikey = "<YOUR_ZENROWS_API_KEY>"
params = {
    "url": url,
    "apikey": apikey,
    "js_render": "true",
    "premium_proxy": "true",
}
response = requests.get("https://api.zenrows.com/v1/", params=params)
print(response.text)

The above code bypasses Cloudflare and scrapes the protected website's HTML. Here's an extract from the output:

<html lang="ru">

<head>

<meta http-equiv="content-type" content="text/html; charset=utf-8">

<meta http-equiv="X-UA-Compatible" content="IE=edge">

<!-- ... -->

<link rel="apple-touch-icon" sizes="57x57" href="https://author.today/...">

<link rel="apple-touch-icon" sizes="60x60" href="https://author.today/...">

<link rel="apple-touch-icon" sizes="72x72" href="https://author.today/...">

<link rel="apple-touch-icon" sizes="76x76" href="https://author.today/...">

<!-- ... -->

</head>

<body>

<!-- ... -->

</body>

</html>

Yay! 🥳 While we saw Cloudscraper fail against newer Cloudflare versions, ZenRows succeeded.

With its intuitive API, you can easily bypass the anti-bot protection and extract the information you need from any website. Here are more Cloudscraper alternatives.

Conclusion

You've seen how to use Cloudscraper in Python, including its features, how it works, and solutions to common errors.

However, as mentioned, Cloudscraper, whether in JavaScript or, as in this article, Python, only helps with older Cloudflare versions. To bypass newer versions, you need a different solution, such as ZenRows. ZenRows also helps you save time and reduce costs, as it's a web scraping API designed to win over all sorts of anti-scraping protections regardless of frequent security updates.

Try ZenRows for free now without a credit card!