Ever had that frustrating moment when your web scraper suddenly stops working because you've been blocked?

You can usually get around these blocks by using proxies with Selenium. In this guide, I'll walk you through setting up proxies in your C# Selenium projects so you can keep your scraping operations running smoothly.

How to Use a Proxy in Selenium C#?

There are two common ways to use a proxy in Selenium C#. The first passes your proxy details to the browser through the AddArgument() method of the browser options.

The second defines your proxy settings in a Proxy object and then assigns that object to the WebDriver options.
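Since the steps in this guide use AddArgument(), here is a minimal sketch of the second approach for comparison. It assumes the Selenium.WebDriver NuGet package is installed; the class name ProxyObjectExample and the method BuildOptions are hypothetical names chosen for illustration, and the proxy address is passed as host:port without a scheme.

```csharp
using OpenQA.Selenium;
using OpenQA.Selenium.Chrome;

public static class ProxyObjectExample
{
    // Builds ChromeOptions that send HTTP and HTTPS traffic through one proxy.
    // proxyAddress is expected as "host:port", for example "71.86.129.131:8080".
    public static ChromeOptions BuildOptions(string proxyAddress)
    {
        var proxy = new Proxy
        {
            Kind = ProxyKind.Manual,   // manual proxy configuration
            HttpProxy = proxyAddress,  // proxy for plain HTTP requests
            SslProxy = proxyAddress    // proxy for HTTPS requests
        };

        var options = new ChromeOptions();
        options.Proxy = proxy;
        return options;
    }
}
```

You would then pass the result to the driver, for example new ChromeDriver(ProxyObjectExample.BuildOptions("71.86.129.131:8080")).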

Before we dive into the step-by-step, here's a basic Selenium script to which you can add proxy configurations.

using OpenQA.Selenium;
using OpenQA.Selenium.Chrome;
using System;
using System.Threading;

class Program
{
    static void Main()
    {
        // Set up the ChromeDriver instance
        IWebDriver driver = new ChromeDriver();

        // Navigate to target website
        driver.Navigate().GoToUrl("https://ident.me");

        // Add a wait for three seconds
        Thread.Sleep(3000);

        // Select the HTML body
        IWebElement pageElement = driver.FindElement(By.TagName("body"));

        // Get and print the text content of the page
        string pageContent = pageElement.Text;
        Console.WriteLine(pageContent);

        // Close the browser
        driver.Quit();
    }
}

This code creates a ChromeDriver instance, navigates to ident.me (a website that returns the web client's IP address as HTML content), and prints the page content.

If you'd like a web scraping refresher, check out our C# web scraping guide.

Step 1: Use a Proxy in an HTTP Request

Start by creating a new ChromeOptions instance. Then, using options.AddArgument(), specify your proxy details within the browser options. Note: this example uses a free proxy from FreeProxyList.

ChromeOptions options = new ChromeOptions();

// Configure the proxy using AddArgument
options.AddArgument("--proxy-server=http://71.86.129.131:8080");

To verify it works, let's add the proxy configuration above to the basic script we created earlier. You'll have the following complete code.

using OpenQA.Selenium;
using OpenQA.Selenium.Chrome;
using System;
using System.Threading;

class Program
{
    static void Main()
    {
        ChromeOptions options = new ChromeOptions();

        // Configure the proxy using AddArgument
        options.AddArgument("--proxy-server=http://71.86.129.131:8080");

        // Set up the ChromeDriver instance with the proxy options
        IWebDriver driver = new ChromeDriver(options);

        // Navigate to target website
        driver.Navigate().GoToUrl("http://ident.me");

        // Add a wait for three seconds
        Thread.Sleep(3000);

        // Select the HTML body
        IWebElement pageElement = driver.FindElement(By.TagName("body"));

        // Get and print the text content of the page
        string pageContent = pageElement.Text;
        Console.WriteLine(pageContent);

        // Close the browser
        driver.Quit();
    }
}

Run it, and the output should be your proxy's IP address.

71.86.129.131
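Rather than comparing the output by eye, you could check it in code. The following is a small sketch, not part of Selenium: ProxyCheck and MatchesProxy are hypothetical names, and the check assumes the proxy exits from the same IP address that appears in its URL, which holds for a single static proxy like this one but not necessarily for gateway-style services.

```csharp
using System;

public static class ProxyCheck
{
    // Returns true when the IP printed by the page equals the host part of the proxy URL.
    public static bool MatchesProxy(string proxyUrl, string pageContent)
    {
        // For "http://71.86.129.131:8080", Uri.Host is "71.86.129.131"
        string proxyHost = new Uri(proxyUrl).Host;

        // Trim the page text because it may carry surrounding whitespace or a newline
        return string.Equals(proxyHost, pageContent.Trim(), StringComparison.Ordinal);
    }
}
```

In the script above, that would be a call such as ProxyCheck.MatchesProxy("http://71.86.129.131:8080", pageContent) after reading the page text.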

Awesome, you've configured your first Selenium C# proxy.

However, while we used a free proxy in the example above, free proxies are generally unreliable. In real-world use cases, you'll need premium proxies, which often require additional configuration. Let's see how to implement such proxies in Selenium C#.

Proxy Authentication with Selenium C#

Premium proxy providers often require credentials, such as a username and password, for security and access control.

Unfortunately, the browser options offer no dedicated setting for proxy credentials. However, Selenium's bidirectional (BiDi) APIs provide the NetworkAuthenticationHandler class, which lets you supply authentication information for network requests.

This class has two properties: Credentials and UriMatcher. The Credentials property holds the username and password, while the UriMatcher property specifies which request URIs those credentials should be used for.

Therefore, to authenticate your Selenium C# proxy, set up the handler with your proxy credentials and register it with the driver's network interface using the AddAuthenticationHandler() method.

// Create the NetworkAuthenticationHandler with credentials
var networkAuthenticationHandler = new NetworkAuthenticationHandler
{
    UriMatcher = uri => uri.Host.Contains("ident.me"), // Only apply for the specific host
    Credentials = new PasswordCredentials("<YOUR_USERNAME>", "<YOUR_PASSWORD>")
};

// Register the authentication handler with the driver's network interface
var networkInterceptor = driver.Manage().Network;
networkInterceptor.AddAuthenticationHandler(networkAuthenticationHandler);

// Start network monitoring so the handler can answer authentication challenges
await networkInterceptor.StartMonitoring();

So, if the proxy in Step 1 were premium, you could authenticate it by adding the above snippet to the full code. Your new code should now look like this.

using OpenQA.Selenium;
using OpenQA.Selenium.Chrome;
using System;
using System.Threading;
using System.Threading.Tasks;

class Program
{
    static async Task Main()
    {
        ChromeOptions options = new ChromeOptions();

        // Configure the proxy using AddArgument
        options.AddArgument("--proxy-server=http://71.86.129.131:8080");

        // Set up the ChromeDriver instance with the proxy options
        IWebDriver driver = new ChromeDriver(options);

        // Create the NetworkAuthenticationHandler with credentials
        var networkAuthenticationHandler = new NetworkAuthenticationHandler
        {
            UriMatcher = uri => uri.Host.Contains("ident.me"), // Only apply for the specific host
            Credentials = new PasswordCredentials("<YOUR_USERNAME>", "<YOUR_PASSWORD>")
        };

        // Register the authentication handler with the driver's network interface
        var networkInterceptor = driver.Manage().Network;
        networkInterceptor.AddAuthenticationHandler(networkAuthenticationHandler);

        // Start network monitoring so the handler can answer authentication challenges
        await networkInterceptor.StartMonitoring();

        // Navigate to target website
        driver.Navigate().GoToUrl("http://ident.me");

        // Add a wait for three seconds
        Thread.Sleep(3000);

        // Select the HTML body
        IWebElement pageElement = driver.FindElement(By.TagName("body"));

        // Get and print the text content of the page
        string pageContent = pageElement.Text;
        Console.WriteLine(pageContent);

        // Close the browser
        driver.Quit();
    }
}
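The UriMatcher used above is a predicate over System.Uri, so you can make it as loose or as strict as you need. The sketch below contrasts the Contains check from the example with a stricter host comparison. HostMatchers and its two methods are hypothetical helpers written for illustration, and the sketch assumes the property accepts a Func<Uri, bool>, as the lambda in the example suggests.

```csharp
using System;

public static class HostMatchers
{
    // Loose match, as in the example above: true for any host that contains the text.
    public static Func<Uri, bool> Contains(string text) =>
        uri => uri.Host.Contains(text);

    // Stricter match: true only for the host itself or one of its subdomains.
    public static Func<Uri, bool> HostOrSubdomain(string host) =>
        uri => uri.Host.Equals(host, StringComparison.OrdinalIgnoreCase)
            || uri.Host.EndsWith("." + host, StringComparison.OrdinalIgnoreCase);
}
```

With the loose matcher, a host such as ident.me.example.com would also receive your credentials. The stricter version limits them to ident.me and its subdomains, which may be the safer default when the credentials are sensitive.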

Step 2: Implement a Rotating Proxy in Selenium C#

Rotating proxies is vital when making numerous requests to a target server. Websites often impose rate limits and flag such automated requests as suspicious activity. However, by periodically changing IP addresses, you distribute traffic across multiple IPs, and your requests appear to come from different users.

To build a C# proxy rotator in Selenium, you first need a pool of proxies to choose from for each request. We've grabbed a few from FreeProxyList.

Start by defining your proxy pool.

using OpenQA.Selenium;
using OpenQA.Selenium.Chrome;
using System;
using System.Collections.Generic;

class Program
{
    static void Main()
    {
        var proxies = new List<string>
        {
            "http://211.193.1.11:80",
            "http://138.68.60.8:8080",
            "http://209.13.186.20:80"
            // Add more proxy configurations as needed
        };
    }
}

Next, select a random proxy, create a new ChromeOptions instance, and assign the selected proxy to the browser options using the AddArgument() method.

//..
static void Main()
{
    //..

    // Select a random proxy configuration
    var random = new Random();
    int randomIndex = random.Next(proxies.Count);
    string randomProxy = proxies[randomIndex];

    // Create a new ChromeOptions instance
    ChromeOptions options = new ChromeOptions();

    // Assign proxy to chrome instance using AddArgument
    options.AddArgument($"--proxy-server={randomProxy}");
    options.AddArgument("headless");
}

Lastly, implement your scraping logic like in the basic script we created earlier. Putting everything together, you should have the following complete code.

using OpenQA.Selenium;
using OpenQA.Selenium.Chrome;
using System;
using System.Collections.Generic;
using System.Threading;

class Program
{
    static void Main()
    {
        var proxies = new List<string>
        {
            "http://211.193.1.11:80",
            "http://138.68.60.8:8080",
            "http://209.13.186.20:80"
            // Add more proxy configurations as needed
        };

        // Select a random proxy configuration
        var random = new Random();
        int randomIndex = random.Next(proxies.Count);
        string randomProxy = proxies[randomIndex];

        // Create a new ChromeOptions instance
        ChromeOptions options = new ChromeOptions();

        // Assign proxy to chrome instance using AddArgument
        options.AddArgument($"--proxy-server={randomProxy}");
        options.AddArgument("headless");

        // Set up the ChromeDriver instance
        IWebDriver driver = new ChromeDriver(options);

        // Navigate to target website
        driver.Navigate().GoToUrl("http://ident.me");

        // Add a wait for three seconds
        Thread.Sleep(3000);

        // Select the HTML body
        IWebElement pageElement = driver.FindElement(By.TagName("body"));

        // Get and print the text content of the page
        string pageContent = pageElement.Text;
        Console.WriteLine(pageContent);

        // Close the browser
        driver.Quit();
    }
}

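To verify it works, run the script several times. Because each run picks a proxy at random, the reported IP address should change between runs, although the same proxy can occasionally be picked twice in a row. Here are the results for two requests.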

211.193.1.11

//..

138.68.60.8

Awesome! You've built your first Selenium C# proxy rotator.
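The script above picks one proxy per run. If you would rather cycle through the pool in a fixed order, so that consecutive requests never reuse the same proxy while more than one is available, a round-robin rotator is one option. This is a sketch under that assumption: ProxyRotator is a hypothetical class for illustration, not a Selenium type.

```csharp
using System;
using System.Collections.Generic;

public sealed class ProxyRotator
{
    private readonly IReadOnlyList<string> _proxies;
    private int _next; // index of the proxy to hand out on the next call

    public ProxyRotator(IReadOnlyList<string> proxies)
    {
        if (proxies == null || proxies.Count == 0)
            throw new ArgumentException("At least one proxy is required.", nameof(proxies));
        _proxies = proxies;
    }

    // Returns the proxies in list order, wrapping around after the last one.
    public string Next()
    {
        string proxy = _proxies[_next];
        _next = (_next + 1) % _proxies.Count;
        return proxy;
    }
}
```

Since the --proxy-server argument is applied when the browser starts, each rotation would need fresh ChromeOptions and a new ChromeDriver, with options.AddArgument($"--proxy-server={rotator.Next()}") called before the driver is created.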

Now, let's try your proxy rotator in a real-world scenario against a protected website: a G2 product review page.

For that, replace the target URL in Step 2 with https://www.g2.com/products/salesforce-salesforce-sales-cloud/reviews. Run your code, and the request will be blocked, returning a page like the one below.

<!DOCTYPE html>\n

<!--[if lt IE 7]>

</head>\n <body>\n <div.......">\n

<h1 data-translate="block_headline">Sorry, you have been blocked</h1>

<h2 class="cf-subheadline">

<span data-translate="unable_to_access">You are unable to access</span> g2.com/...

</h2>

#....

This is because anti-bot systems easily detect free proxies. We only used them in the above examples to explain the basics. For better results, you need premium proxies. Let's explore those next.
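When you rotate proxies, it helps to detect a block in code so that the script can move on to another proxy. The following is a rough heuristic, assuming the block page contains the same headline as the response shown above. BlockDetector and LooksBlocked are hypothetical names, and other sites or anti-bot vendors will use different wording.

```csharp
using System;

public static class BlockDetector
{
    // Heuristic only: looks for the headline text from the block page shown above.
    public static bool LooksBlocked(string pageSource)
    {
        if (string.IsNullOrEmpty(pageSource))
            return false;

        return pageSource.IndexOf(
            "Sorry, you have been blocked",
            StringComparison.OrdinalIgnoreCase) >= 0;
    }
}
```

You could call it as BlockDetector.LooksBlocked(driver.PageSource) right after navigating, and switch to the next proxy whenever it returns true.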

Premium Proxy to Avoid Getting Blocked

Free proxies present major challenges for automated web scraping. Their unstable connections, compromised security, and poor reputation make them unsuitable for professional use. Websites frequently detect and block these free proxies, making them unreliable for consistent data collection.

Premium residential proxies provide a more dependable solution for avoiding detection. Using residential IPs from legitimate sources, premium proxies can effectively simulate real user behavior. With features like automatic IP rotation and geographic targeting, they substantially improve the success rate of web scraping tasks.

ZenRows' Residential Proxies is an industry-leading premium proxy service that provides access to 55M+ residential IPs distributed across 185+ countries. It comes equipped with powerful features including dynamic IP rotation, smart proxy selection, and customizable geo-targeting, all supported by enterprise-grade uptime.

Let's integrate ZenRows' Residential Proxies with Selenium in C#.

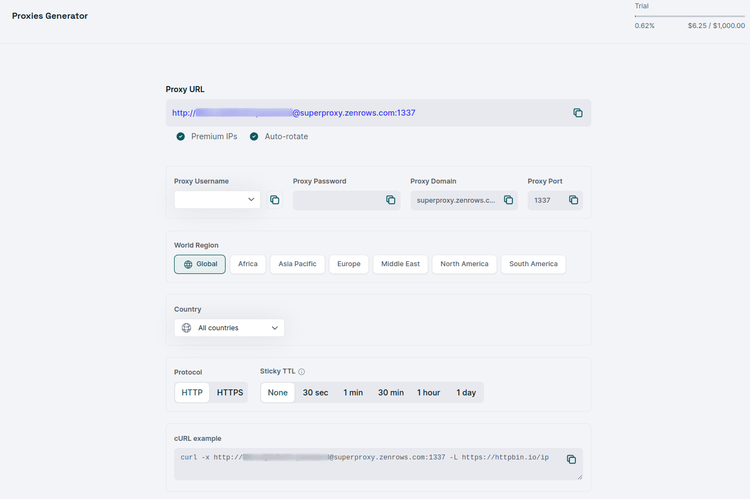

First, sign up and you'll get to the Proxy Generator dashboard. Your proxy credentials will be automatically generated.

Take your proxy credentials (username and password) and replace the placeholders in the following code:

using OpenQA.Selenium;
using OpenQA.Selenium.Chrome;
using System;
using System.Threading;
using System.Threading.Tasks;

class Program
{
    static async Task Main()
    {
        ChromeOptions options = new ChromeOptions();

        // Configure the proxy using AddArgument
        options.AddArgument("--proxy-server=http://superproxy.zenrows.com:1337");

        // Set up the ChromeDriver instance with the proxy options
        IWebDriver driver = new ChromeDriver(options);

        // Create the NetworkAuthenticationHandler with credentials
        var networkAuthenticationHandler = new NetworkAuthenticationHandler
        {
            UriMatcher = uri => uri.Host.Contains("httpbin.io"), // Only apply for the specific host
            Credentials = new PasswordCredentials("<ZENROWS_PROXY_USERNAME>", "<ZENROWS_PROXY_PASSWORD>")
        };

        // Register the authentication handler with the driver's network interface
        var networkInterceptor = driver.Manage().Network;
        networkInterceptor.AddAuthenticationHandler(networkAuthenticationHandler);

        // Start network monitoring so the handler can answer authentication challenges
        await networkInterceptor.StartMonitoring();

        // Navigate to target website
        driver.Navigate().GoToUrl("https://httpbin.io/ip");

        // Add a wait for three seconds
        Thread.Sleep(3000);

        // Select the HTML body
        IWebElement pageElement = driver.FindElement(By.TagName("body"));

        // Get and print the text content of the page
        string pageContent = pageElement.Text;
        Console.WriteLine(pageContent);

        // Close the browser
        driver.Quit();
    }
}

Running this code multiple times will show output like this:

# request 1
{
  "origin": "45.136.231.85:62104"
}

# request 2
{
  "origin": "191.96.78.192:35721"
}

Excellent! The changing IP addresses in the output confirm that your script is successfully routing through ZenRows' residential proxy network. Your C# Selenium script is now equipped with premium proxies that dramatically reduce the likelihood of being blocked during web scraping.
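If you want to compare the IP addresses in code rather than by reading the output, you can parse the JSON body. Here is a minimal sketch using System.Text.Json. OriginParser and ExtractIp are hypothetical names, and the parsing assumes the origin value is an IPv4 address with an optional :port suffix, as in the output above.

```csharp
using System;
using System.Text.Json;

public static class OriginParser
{
    // Extracts the IP from a body such as {"origin": "45.136.231.85:62104"}.
    public static string ExtractIp(string json)
    {
        using JsonDocument doc = JsonDocument.Parse(json);
        string origin = doc.RootElement.GetProperty("origin").GetString() ?? string.Empty;

        // Drop the ":port" suffix when present (IPv4 only; IPv6 would need different handling)
        int colon = origin.LastIndexOf(':');
        return colon >= 0 ? origin.Substring(0, colon) : origin;
    }
}
```

Calling OriginParser.ExtractIp(pageContent) on each run and comparing the results shows whether the exit IP actually changed.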

Conclusion

Setting a Selenium C# proxy lets you route your requests through a different IP address. However, sending too many requests from a single IP can still get that IP banned, so rotate your proxies for better results.

Instead of wrestling with Selenium and the tedious technicalities, consider ZenRows. Our web scraping API handles everything you need to extract data at scale without blocks. Sign up now to try ZenRows for free.