Do you want to avoid inspecting and tracing element attributes while web scraping? AutoScraper learns extraction rules from text-based sample values you provide, so you can extract content without writing CSS selectors.

In this tutorial, you'll learn how the AutoScraper library works in Python and how to use it for content extraction.

What Is AutoScraper?

AutoScraper is a Python web scraping library that extracts similar content from different web pages.

After scraping content from an initial web page based on user-defined keywords, AutoScraper learns the scraping pattern from those examples and applies it to get similar data from more pages.

However, AutoScraper has some limitations. It doesn't support JavaScript-rendered web pages, and it doesn't follow pagination links or crawl a website on its own, so you must pass each page URL to it yourself. Another limitation is that the extracted data may be inaccurate because AutoScraper infers its matching rules from your sample values instead of using CSS selectors you specify.

Tutorial: How to Scrape With AutoScraper

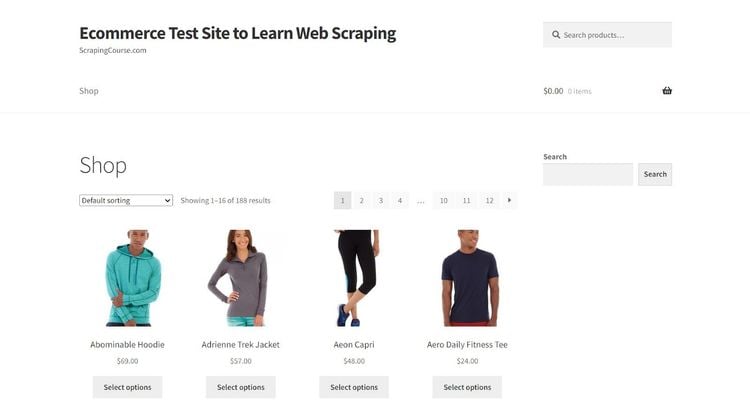

AutoScraper is suitable for scraping targeted content based on keywords. You'll learn to use it by extracting product names and prices from the ScrapingCourse e-commerce demo website.

Here's what the target website looks like:

Let's start with the prerequisites.

Prerequisites

This tutorial uses Python 3.12+ on a Windows operating system. Ensure you've downloaded and installed the latest version from the Python download page. You can develop your scraper using any code editor, but this tutorial uses VS Code.

Start with installing AutoScraper using pip:

pip install autoscraper

Step 1: Provide URL and Sample Data

AutoScraper obtains content by targeting specific keywords on a web page. The first step is to provide the initial URL and the keywords you want to extract from that page. This initial process trains the AutoScraper model and serves as a template for auto-scraping content from more pages.
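To make the idea of learning from sample keywords more concrete, here is a toy sketch that uses only Python's standard library. It is not AutoScraper's actual algorithm: it simply remembers the chain of tags and class names above each sample value, then returns every text node that sits under the same chain. The HTML snippets, class names, and the helper names (TextCollector, learn_paths, extract_similar) are assumptions made up for this illustration.

```python
from html.parser import HTMLParser

# Tags that never wrap text, so they are not pushed onto the path.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class TextCollector(HTMLParser):
    """Record every non-empty text node with the (tag, class) path above it."""

    def __init__(self):
        super().__init__()
        self.open_elements = []  # currently open elements as (tag, class) pairs
        self.found = []          # (path, text) pairs in document order

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_TAGS:
            self.open_elements.append((tag, dict(attrs).get("class") or ""))

    def handle_endtag(self, tag):
        # Close the most recently opened matching element, if there is one.
        for index in range(len(self.open_elements) - 1, -1, -1):
            if self.open_elements[index][0] == tag:
                del self.open_elements[index:]
                break

    def handle_data(self, data):
        text = data.strip()
        if text:
            self.found.append((tuple(self.open_elements), text))


def collect(html):
    """Parse the HTML and return its (path, text) pairs."""
    parser = TextCollector()
    parser.feed(html)
    parser.close()
    return parser.found


def learn_paths(html, wanted_list):
    """Remember the path of every text node that equals a sample value."""
    wanted = set(wanted_list)
    return {path for path, text in collect(html) if text in wanted}


def extract_similar(html, paths):
    """Return all text nodes located under one of the learned paths."""
    return [text for path, text in collect(html) if path in paths]


# Made-up markup that loosely mimics a product listing.
PAGE_1 = """
<ul>
  <li class="product"><h2 class="name">Abominable Hoodie</h2><span class="price">$69.00</span></li>
  <li class="product"><h2 class="name">Adrienne Trek Jacket</h2><span class="price">$57.00</span></li>
</ul>
"""
PAGE_2 = """
<ul>
  <li class="product"><h2 class="name">Atlas Fitness Tank</h2><span class="price">$18.00</span></li>
</ul>
"""

paths = learn_paths(PAGE_1, ["Abominable Hoodie", "$69.00"])
print(extract_similar(PAGE_1, paths))
# ['Abominable Hoodie', '$69.00', 'Adrienne Trek Jacket', '$57.00']
print(extract_similar(PAGE_2, paths))
# ['Atlas Fitness Tank', '$18.00']
```

Two sample values are enough for the toy to pick up every name and price on the first page and on a second page with the same layout. The toy returns items in document order, which may differ from the order AutoScraper uses in its own results.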

Let's target the first product's name ("Abominable Hoodie") and price ("$69.00") to see what AutoScraper extracts.

To achieve that, import the AutoScraper library, specify the first product page URL in your code, and create a list of target keywords:

# import the required library

from autoscraper import AutoScraper

# specify the target URL

url = "https://www.scrapingcourse.com/ecommerce/"

# enter the specific keywords to target and retrieve.

wanted_list = ["Abominable Hoodie", "$69.00"]

Now, you're ready to scrape with AutoScraper!

You'll extract that content in the next section.

Step 2: Create the AutoScraper to Get Your Data

The next step is to create an AutoScraper object and build your scraper using the initial URL and the specified keywords.

The scraper learns the location pattern of the target element that contains those keywords in the DOM. Then, it executes subsequent scraping tasks.

Let's expand the previous code to see how that works:

# ...

# create an AutoScraper object

scraper = AutoScraper()

# build the scraper on an initial URL

result = scraper.build(url, wanted_list)

# print the scraped data

print(result)

Merge the above code with the code from the previous section. Your final code should look like this:

# import the required library

from autoscraper import AutoScraper

# specify the target URL

url = "https://www.scrapingcourse.com/ecommerce/"

# enter the specific keywords to target and retrieve.

wanted_list = ["Abominable Hoodie", "$69.00"]

# create an AutoScraper object

scraper = AutoScraper()

# build the scraper on an initial URL

result = scraper.build(url, wanted_list)

# print the scraped data

print(result)

Save the code in a file named scraper.py, then execute the script by running the following command:

python scraper.py

The code traces similar content and extracts the names and prices of all products into a list, as shown:

[

'Abominable Hoodie',

'Adrienne Trek Jacket',

# … other product names omitted for brevity

'$69.00',

'$57.00',

# … other product prices omitted for brevity

]
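Since the result is one flat list that mixes names and prices, you may want to separate the two before storing them. The following is a minimal sketch, assuming every price starts with a dollar sign as in the output above. The helper names (split_results, pair_results) and the price pattern are illustrative and not part of AutoScraper.

```python
import re

# Assumption: prices look like "$69.00"; adjust the pattern for other currencies.
PRICE_PATTERN = re.compile(r"^\$\d[\d,]*(\.\d{2})?$")


def split_results(flat_results):
    """Separate a flat result list into product names and prices."""
    names, prices = [], []
    for item in flat_results:
        (prices if PRICE_PATTERN.match(item) else names).append(item)
    return names, prices


def pair_results(names, prices):
    """Pair names with prices by position; refuse to guess if the counts differ."""
    if len(names) != len(prices):
        raise ValueError(
            f"cannot pair {len(names)} names with {len(prices)} prices"
        )
    return list(zip(names, prices))


sample = ["Abominable Hoodie", "Adrienne Trek Jacket", "$69.00", "$57.00"]
names, prices = split_results(sample)
print(pair_results(names, prices))
# [('Abominable Hoodie', '$69.00'), ('Adrienne Trek Jacket', '$57.00')]
```

Pairing by position is only safe when both lists have the same length and order. The lists can differ in length, for example if repeated prices are collapsed into one entry, which is why pair_results raises an error instead of silently misaligning the data.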

The AutoScraper script now scrapes data based on the specified pattern. Congratulations, you've just built your first trained scraper! Let's use it to extract similar content from another page.

Step 3: Get Similar Results From Different URLs

The previously trained scraper can extract similar content from another web page. In this example, since you scraped product names and prices from the first URL, AutoScraper has already learned that pattern and will target the same content on other URLs.

Let's use the trained scraper on the second and third pages of the same website. To do that, call the get_result_similar method of the previous scraper instance with each new page URL.

Extend the previous snippet with the following code:

# ...

# target similar pattern on the second product page

page_2_result = scraper.get_result_similar("https://www.scrapingcourse.com/ecommerce/page/2/")

# print the scraped data

print(page_2_result)

# target similar pattern on the third product page

page_3_result = scraper.get_result_similar("https://www.scrapingcourse.com/ecommerce/page/3/")

# print the scraped data

print(page_3_result)

Add the above snippet to the previous code to get the following result:

# import the required library

from autoscraper import AutoScraper

# specify the target URL

url = "https://www.scrapingcourse.com/ecommerce/"

# enter the specific keywords to target and retrieve.

wanted_list = ["Abominable Hoodie", "$69.00"]

# create an AutoScraper object

scraper = AutoScraper()

# build the scraper on an initial URL

result = scraper.build(url, wanted_list)

# print the scraped data

print(result)

# target similar pattern on the second product page

page_2_result = scraper.get_result_similar("https://www.scrapingcourse.com/ecommerce/page/2/")

# print the scraped data

print(page_2_result)

# target similar pattern on the third product page

page_3_result = scraper.get_result_similar("https://www.scrapingcourse.com/ecommerce/page/3/")

# print the scraped data

print(page_3_result)

The code outputs all the product names and prices from all the target pages:

[

'Abominable Hoodie',

# ... other product names omitted for brevity

'$69.00',

# ... other product prices omitted for brevity

]

[

'Atlas Fitness Tank',

# ... other product names omitted for brevity

'$18.00',

# ... other product prices omitted for brevity

]

[

'Cassius Sparring Tank',

# ... other product names omitted for brevity

'$18.00',

# ... other product prices omitted for brevity

]
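Repeating the same call for every page gets tedious as the page count grows. The sketch below shows one way to loop over the pages instead. It assumes the demo website keeps the /page/<number>/ URL pattern used above, and the helper names (build_page_urls, scrape_pages) are hypothetical. The scraper argument is any already-built AutoScraper instance.

```python
def build_page_urls(base_url, first_page, last_page):
    """Return listing URLs for pages first_page..last_page (inclusive)."""
    base = base_url.rstrip("/")
    return [f"{base}/page/{number}/" for number in range(first_page, last_page + 1)]


def scrape_pages(scraper, urls):
    """Run an already-built scraper on each URL and map each URL to its results."""
    return {url: scraper.get_result_similar(url) for url in urls}


urls = build_page_urls("https://www.scrapingcourse.com/ecommerce/", 2, 3)
print(urls)
# ['https://www.scrapingcourse.com/ecommerce/page/2/', 'https://www.scrapingcourse.com/ecommerce/page/3/']
```

After building the scraper on the first page, calling scrape_pages(scraper, urls) would return a dictionary with one result list per page URL.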

You now know how to reuse a trained AutoScraper model to extract similar data from several pages. Let's save that model in the next section.

Step 4 (Optional): Save the AutoScraper Model

You can save your model in your project directory and reuse it for another scraper. To do so, pass a file name to the scraper's save method.

Add the following line to the previous code to save your scraper model in your project directory. Replace "e-commerce-model" with your preferred file name.

# ...

# save the trained scraper to a file

scraper.save("e-commerce-model")

Combine the above with the previous snippet, and you'll get the following final code:

# import the required library

from autoscraper import AutoScraper

# specify the target URL

url = "https://www.scrapingcourse.com/ecommerce/"

# enter the specific keywords to target and retrieve.

wanted_list = ["Abominable Hoodie", "$69.00"]

# create an AutoScraper object

scraper = AutoScraper()

# build the scraper on an initial URL

result = scraper.build(url, wanted_list)

# print the scraped data

print(result)

# target similar pattern on the second product page

page_2_result = scraper.get_result_similar("https://www.scrapingcourse.com/ecommerce/page/2/")

# print the scraped data

print(page_2_result)

# target similar pattern on the third product page

page_3_result = scraper.get_result_similar("https://www.scrapingcourse.com/ecommerce/page/3/")

# print the scraped data

print(page_3_result)

# save the trained scraper to a file

scraper.save("e-commerce-model")

Your stored model is ready for reuse!

Step 5 (Optional): Reuse the AutoScraper Model for Another Scraper File

To reuse the previously stored model, load it by its file name with the load method of a new AutoScraper object. In this example, let's reuse the saved model to scrape content from the fourth product page.

Open another Python file in your project directory and give it a descriptive name (e.g., "scraper2.py"). Import the AutoScraper library and call the saved model:

# import the required library

from autoscraper import AutoScraper

# create an AutoScraper object

scraper = AutoScraper()

# load the saved scraper

scraper.load("e-commerce-model")

# use the loaded model on another page

result = scraper.get_result_similar("https://www.scrapingcourse.com/ecommerce/page/4/")

# show the output

print(result)

The above code scrapes content from the new page based on the trained model:

[

'Deirdre Relaxed-Fit Capri',

'Desiree Fitness Tee',

# ... other product names omitted for brevity

'$63.00',

'$24.00',

# ... other product prices omitted for brevity

]

Bravo! You just reused your stored model in another scraper file.
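Loading fails if the second script runs before the model file exists, for example when it's started from a different directory. As a small safeguard, you could check for the file first. The helper below (require_model_file, a hypothetical name) is a sketch that uses only the standard library and makes no assumption about the file's contents.

```python
from pathlib import Path


def require_model_file(file_name):
    """Return the path as a string if it points to a non-empty file, else raise."""
    path = Path(file_name)
    if not path.is_file():
        raise FileNotFoundError(
            f"no saved model at {path.resolve()}; run the training script first"
        )
    if path.stat().st_size == 0:
        raise ValueError(f"saved model at {path.resolve()} is empty")
    return str(path)
```

In the second scraper file, you would then write scraper.load(require_model_file("e-commerce-model")) to get a clear error message when the model is missing.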

Avoid Getting Blocked When Scraping With AutoScraper

Websites use different anti-bot detection strategies to prevent automated bots, such as web scrapers, from accessing their content. You'll need to find a way to bypass these detection measures to scrape without getting blocked.

You can bypass blocks by adding proxies to your web scraper to extract data from several pages. A proxy changes your IP address, so the server thinks you're requesting from a different location.

AutoScraper supports proxy implementation. Let's set it up using a free proxy from the Free Proxy List. This tutorial uses HTTPS proxies since they work with secure and insecure websites.

Keep in mind that the proxy used in this example may no longer work by the time you read this because free proxies are short-lived. Feel free to grab a fresh one from the proxy website.

Define your proxy details using the "<PROXY_PROTOCOL>://<PROXY_IP_ADDRESS>:<PROXY_PORT>" pattern. Then, pass it to AutoScraper's build method using the request_args argument:

# import the required library

from autoscraper import AutoScraper

# specify the target URL

url = "https://www.scrapingcourse.com/ecommerce/"

# define your proxy details

proxies = {

    "http": "http://168.194.169.108:999",

    "https": "https://168.194.169.108:999",

}

# enter the specific keywords to target and retrieve.

wanted_list = ["Abominable Hoodie", "$69.00"]

# create an AutoScraper object

scraper = AutoScraper()

# build the scraper on an initial URL

result = scraper.build(

    url,

    wanted_list=wanted_list,

    request_args=dict(proxies=proxies),

)

# print the scraped data

print(result)

You've just implemented a proxy with the AutoScraper library!
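Because a single free proxy can stop working at any time, one option is to keep a short list of candidates and try them in turn. The following is a rough sketch, not a built-in AutoScraper feature. The helper name (build_with_fallback) is hypothetical, and the timeout value assumes that request_args is forwarded to the underlying HTTP request in the same way as the proxies setting.

```python
def build_with_fallback(scraper, url, wanted_list, proxy_candidates):
    """Try each proxies dict in turn; return (proxies, result) for the first success."""
    last_error = None
    for proxies in proxy_candidates:
        try:
            result = scraper.build(
                url,
                wanted_list=wanted_list,
                # Assumption: extra keys such as timeout reach the HTTP request.
                request_args=dict(proxies=proxies, timeout=10),
            )
        except Exception as error:  # e.g. a connection or timeout error
            last_error = error
            continue
        if result:  # an empty result means nothing was matched through this proxy
            return proxies, result
    raise RuntimeError("no proxy in the list returned data") from last_error
```

Each item in proxy_candidates has the same shape as the proxies dictionary defined earlier. The function returns the dictionary that worked so you can reuse it for later requests.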

Remember that free proxies don't work for real projects due to their short lifespan. The best option for production-level scraping scenarios is premium web scraping proxies, which require authentication credentials such as a username and password.
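Authenticated proxies are commonly written as "<PROXY_PROTOCOL>://<USERNAME>:<PASSWORD>@<PROXY_IP_ADDRESS>:<PROXY_PORT>". Here is a minimal sketch of a helper that builds such a URL and percent-encodes the credentials so that special characters don't break it. The function name (proxy_url), the placeholder IP address, and the credentials are assumptions for illustration, so replace them with the details from your proxy provider.

```python
from urllib.parse import quote


def proxy_url(protocol, host, port, username=None, password=None):
    """Build '<protocol>://[user:password@]host:port' with encoded credentials."""
    credentials = ""
    if username is not None and password is not None:
        credentials = f"{quote(username, safe='')}:{quote(password, safe='')}@"
    return f"{protocol}://{credentials}{host}:{port}"


# Placeholder values only; 203.0.113.10 is a documentation address, not a real proxy.
print(proxy_url("http", "203.0.113.10", 8080, "user", "p@ss:word"))
# http://user:p%40ss%3Aword@203.0.113.10:8080
```

You can use the returned string as a value in the proxies dictionary and pass that dictionary through request_args as before.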

However, even premium proxies sometimes prove insufficient for heavily protected websites, often resulting in Cloudflare error 1015 and other WAF blocks. In these cases, go for a web scraping API, e.g., ZenRows. ZenRows auto-rotates premium proxies, fixes your request headers, and bypasses CAPTCHAs and other anti-bot measures at scale with a single API call.

ZenRows also features JavaScript instructions, which allow it to act as a headless browser for scraping dynamic web pages with features like infinite scrolling.

Conclusion

In this article, you've learned how to use the AutoScraper library to scrape the web in Python. You now know how to:

- Train the AutoScraper model by scraping content based on user-defined keywords.

- Use the trained model to extract similar content from several pages.

- Save the AutoScraper model locally.

- Reuse the saved AutoScraper model in another scraper file.

Remember that anti-bot systems prevent scrapers from extracting data. The best way to bypass any anti-bot measure, regardless of its complexity, is to integrate ZenRows with your web scraper and scrape any website without getting blocked. Try ZenRows for free!