Renowned for its user-friendly API and branding as "HTTP for humans", Requests is Python's most popular HTTP client. However, as requirements diversify and applications become complex, the need for a Python Requests alternative that offers specialized functionality or enhanced performance arises.

Let's explore your best options, starting with a quick comparison table that overviews each tool's strengths and best use cases.

| Library | Best For | Popularity | Ease of Use | Speed | Asynchronous Support | Documentation |
|---|---|---|---|---|---|---|
| ZenRows | Scraping without getting blocked | Rapidly growing | User-friendly and easy to implement | Fast | Yes | Extensive documentation |
| aiohttp | Making asynchronous requests | Large user base | Moderate | Fast | Yes | Extensive documentation |
| Selenium | Rendering dynamic content | Large user base | Requires additional setup | Resource-intensive | Yes | Extensive documentation |
| httpx | HTTP/2 support and asynchronous requests | Growing user base | Moderate | Moderate | Yes | Well-structured documentation |
| urllib3 | Low-level customization | Large user base | More code verbosity | Fast | No | Extensive documentation |
| Uplink | Declarative API | Small user base | Moderate | Moderate | Yes, through aiohttp and Twisted | Limited documentation |
| GRequests | Adding asynchronous capabilities to Requests | Rapidly growing user base | Easy to use | Fast | Yes, through gevent | Limited documentation |

Why Look for a Python Requests Alternative?

Although Requests sets a high standard for ease of use, there may be better solutions for some use cases. It has some limitations that prompt the need for an alternative. Let's discuss some of them below.

It Lacks Asynchronous Support

As emphasis on performance and scalability increases, the ability to handle multiple requests concurrently becomes critical. However, Python Requests lacks built-in support for asynchronous programming, and each request made blocks the execution of your program until the response is returned.

You can work around this by running Requests calls in threads (for example, with the threading module) or by handing them off to an executor from async/await code. But that only mimics asynchronous behavior, since each call still blocks a thread, and it can increase code complexity. If you want true async requests, you must use a Python Requests alternative, like aiohttp.
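The thread-based workaround can be sketched as follows. This is a minimal illustration using only the standard library. `fetch_all` and `simulated_fetch` are hypothetical names, and `simulated_fetch` merely stands in for a blocking call such as `requests.get(url, timeout=10)`.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_all(urls, fetch, max_workers=8):
    """Call a blocking `fetch(url)` for every URL on a thread pool.

    Results come back in the same order as `urls`.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch, urls))


def simulated_fetch(url):
    # Hypothetical stand-in for a blocking call such as requests.get(url).
    time.sleep(0.1)
    return f"fetched {url}"
```

Each call still occupies a thread while it waits, which is why this approach only approximates what an async client does on a single event loop.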

Requests Is Slower than Some Alternatives

While Requests is widely used, it might not be the fastest option, especially in scenarios where the need for performance outweighs its simplicity and abstraction. That said, its speed compared to alternative libraries can depend on various factors, including the specific use case and the nature of the request.

For example, when handling a large number of requests, alternatives like httpx and aiohttp can outperform Python Requests because their asynchronous capabilities let many requests be in flight at once instead of one at a time.

It Misses Advanced Features

The Requests library prioritizes providing a user-friendly API for everyday use cases, making it easily accessible to anyone, including those new to the Python ecosystem. However, this simplicity also means it may have fewer advanced features than alternative HTTP libraries.

For example, Requests only sends HTTP/1.1 requests. So, it lacks support for the new HTTP protocol, HTTP/2, which offers better performance and addresses the drawbacks of the previous version. Similarly, customization support is limited in Python Requests. This can be detrimental in cases where configuring aspects of your requests and responses is essential.

Heavy with Dependencies

Python Requests is designed to be lightweight. Yet, it relies on some core dependencies (a character-encoding detector such as charset-normalizer or, in older releases, chardet, plus idna, certifi, and urllib3) that might be too heavy for some specific use cases. For instance, in a resource-constrained environment, you want to minimize dependencies as much as possible. So, you'd be better off with libraries that don't rely on any dependency.

Also, if your project is part of a large ecosystem, you want to ensure your HTTP library's dependencies are compatible with other tools in the stack.
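To see what a given installation actually pulls in, you can read the package metadata with the standard library. The sketch below is illustrative and the helper names are hypothetical. It also assumes that optional extras are marked with `extra ==` in the requirement string, which is the usual convention but worth checking for your packages.

```python
from importlib import metadata


def declared_dependencies(package):
    """Return the requirement strings an installed package declares.

    Returns an empty list if the package is not installed or declares none.
    """
    try:
        return metadata.requires(package) or []
    except metadata.PackageNotFoundError:
        return []


def core_dependencies(package):
    """Keep only unconditional requirements, dropping optional extras."""
    return [req for req in declared_dependencies(package) if "extra ==" not in req]
```

Calling `core_dependencies("requests")` in an environment where Requests is installed lists the packages that come along with it, which is a quick way to compare candidate HTTP libraries.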

You Get Easily Blocked when Web Scraping

With most websites implementing anti-bot mechanisms to regulate bot traffic, your Requests web scraper can easily get blocked. HTTP clients have distinctive fingerprints, such as their default headers, that web servers can easily identify and use to reject non-browser requests.
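One visible part of that fingerprint is the set of default headers. The sketch below inspects what Requests sends when you supply nothing, and shows how a session can override it. The helper names are hypothetical, and replacing headers addresses only one of several signals a server may check.

```python
import requests


def default_fingerprint():
    """Return the headers Requests attaches when none are supplied."""
    return dict(requests.utils.default_headers())


def session_with_headers(extra_headers):
    """Create a session whose default headers are overridden or extended."""
    session = requests.Session()
    session.headers.update(extra_headers)
    return session
```

The default `User-Agent` value begins with `python-requests/`, which makes an unmodified scraper trivial to spot.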

While some best practices or techniques aim to help you avoid detection, they almost always fail against advanced anti-bot protection. That said, you can integrate with alternatives like ZenRows to scrape without getting blocked. More on that later.

Requests Can't Render Dynamic Content

When a webpage relies on JavaScript to load or modify content dynamically, Python Requests cannot retrieve the fully rendered content of the page. Instead, it'll scrape only the static HTML and won't capture any subsequent dynamic update.
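The effect is easy to reproduce without any network access. In the illustrative sketch below, `STATIC_HTML` is a made-up page whose price is filled in by a script. Parsing the markup as an HTTP client would receive it yields an empty element, because nothing ever executes the script. The parser is deliberately simple and assumes the target element contains no unclosed void tags such as `<br>`.

```python
from html.parser import HTMLParser

# Hypothetical page: the <div> is empty in the HTML the server sends and is
# only filled in once a browser runs the script.
STATIC_HTML = """
<html><body>
  <div id="price"></div>
  <script>document.getElementById("price").textContent = "$19.99";</script>
</body></html>
"""


class TextById(HTMLParser):
    """Collect the text found inside the element with a given id."""

    def __init__(self, target_id):
        super().__init__()
        self.target_id = target_id
        self.depth = 0  # greater than 0 while inside the target element
        self.text = []

    def handle_starttag(self, tag, attrs):
        if self.depth:
            self.depth += 1
        elif dict(attrs).get("id") == self.target_id:
            self.depth = 1

    def handle_endtag(self, tag):
        if self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.text.append(data)


def extract_text(html, target_id):
    """Return the stripped text inside the element with id `target_id`."""
    parser = TextById(target_id)
    parser.feed(html)
    return "".join(parser.text).strip()
```

`extract_text(STATIC_HTML, "price")` returns an empty string, whereas the same call on markup captured after a browser has run the script would return the price.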

You must consider alternatives with headless browser functionality to render dynamic content, such as ZenRows and Selenium.

1. ZenRows: Web Scraping Master Key

If you're looking to scrape without getting blocked and at scale, ZenRows is the perfect Python Requests alternative. This data extraction tool provides everything you need to avoid detection, including rotating proxies, headless browser functionalities, CAPTCHA solving, and more.

Also, ZenRows is a rapidly growing tool, with developer adoption increasing by 27.4% monthly. Its support for multiple languages and versatility as an API, proxy, and SDK make this tool a valuable alternative.
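When used as an API, a request typically amounts to calling a single endpoint with your key and the target URL as query parameters. The sketch below only builds such a URL. The endpoint and the `apikey`, `url`, `js_render`, and `premium_proxy` parameter names are assumptions here and should be verified against the ZenRows documentation. `build_zenrows_url` is a hypothetical helper.

```python
from urllib.parse import urlencode

# Assumed endpoint and parameter names; verify them against the ZenRows docs.
ZENROWS_ENDPOINT = "https://api.zenrows.com/v1/"


def build_zenrows_url(api_key, target_url, js_render=False, premium_proxy=False):
    """Build the request URL for scraping `target_url` through the API."""
    params = {"apikey": api_key, "url": target_url}
    if js_render:
        params["js_render"] = "true"  # ask for headless-browser rendering
    if premium_proxy:
        params["premium_proxy"] = "true"  # ask for rotating premium proxies
    return f"{ZENROWS_ENDPOINT}?{urlencode(params)}"
```

The resulting URL can be fetched with any HTTP client, including Requests itself, so existing scraping code needs little change.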

👍 Advantages

- Headless browser functionality.

- Best anti-bot bypass features to scrape all web pages.

- Auto-rotating proxies.

- Easy to use and intuitive API.

- Extensive documentation and a rapidly growing developer community.

- No-code/low-code scraping with Zapier and Make integrations.

👎 Disadvantages

- Limited customization compared to other open-source alternatives.

👏 Testimonials

"... The thing I like the most is how easy to use ZenRows is…"

- Valeria S.

"I never had to worry about bot prevention systems… the ZenRows API takes care of that."

- Alper B.

"I've found slightly cheaper options, but much less sophisticated."

- Joseph N.

"... The best side is the reliability."

- Jose Ilberto F.

2. aiohttp: Peak Concurrency

Like Requests, aiohttp is a widely used Python HTTP library that supports authentication, custom headers, cookies, redirects, etc. However, they differ in critical areas. Firstly, aiohttp can handle asynchronous operations right off the bat. It's built on the asyncio library to drive support for async/await syntax. So, if you're familiar with asyncio, you can quickly get started with aiohttp.

Additionally, this tool offers an HTTP server, which enables the building of scalable web applications that can handle concurrent connections efficiently. Finally, aiohttp's popularity is evident in its active developer community, with over 14k stars and 2k forks on GitHub.
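A minimal sketch of the client side, assuming aiohttp is installed: one shared `ClientSession` issues all requests, and `asyncio.gather` waits for them concurrently. The function names and the 10-second timeout are illustrative choices.

```python
import asyncio

import aiohttp


async def fetch_status(session, url):
    """Request one URL and return its HTTP status code."""
    async with session.get(url) as response:
        await response.read()  # drain the body so the connection can be reused
        return response.status


async def fetch_all(urls, timeout_seconds=10):
    """Fetch every URL concurrently over a single shared session."""
    timeout = aiohttp.ClientTimeout(total=timeout_seconds)
    async with aiohttp.ClientSession(timeout=timeout) as session:
        tasks = [fetch_status(session, url) for url in urls]
        return await asyncio.gather(*tasks)
```

You would run it with `asyncio.run(fetch_all(urls))`. Results are returned in the same order as the input URLs.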

👍 Advantages

- Handles asynchronous requests.

- Supports both client and HTTP server.

- Provides native support for handling WebSocket connections.

- Intuitive client API.

- Offers hooks and middleware mechanisms for extending functionalities.

- Active developer community and comprehensive documentation.

👎 Disadvantages

- Lacks sync compatibility.

- JSON decoding needs an explicit, awaited `response.json()` call.

- Steep learning curve for beginners.

👏 Testimonials

"... aiohttp is indeed neat. No WSGI (async-based). The API is clean and HTTP-level."

- Enz.

"Be careful with aiohttp, asyncio is great, but the actual http parser in aiohttp is super slow."

- Cshenton.

"I prototyped moving to aiohttp from requests/multiprocessing. The speedup was amazing. Saw something like a 60% reduction in runtime for our use case."

- Hermitdev.

3. Selenium: The Headless Browser Alternative

Selenium is a popular open-source browser automation framework with 27k GitHub stars and 7.8k forks. It drives real browsers, including in headless mode. While initially built for automated testing, its ability to render JavaScript like an actual browser makes it a worthy Python Requests alternative, especially when scraping dynamic websites.

This tool also enables you to simulate natural user behavior and web page interactions, such as clicking buttons, scrolling, and mouse movements. It provides numerous web scraping features, including element identification, cookie management, and proxy compatibility.

However, Selenium is resource-intensive and can get slow, particularly when running multiple requests in parallel.

👍 Advantages

- Supports NodeJS, Python, Java, Ruby, Perl, R, Haskell, and Objective-C.

- Automates multiple browsers (Chrome, Safari, IE, Opera, Edge, and Firefox).

- Large user base and active developer community.

- Parallel test execution.

- Extensive documentation and resources.

👎 Disadvantages

- Requires WebDriver configuration and setup for specific browsers.

- Selenium is resource-intensive and can get slow, especially for large-scale scraping.

👏 Testimonials

"Can handle almost all the scenarios we can think of for a website. I've worked for Walt Disney, and we used this tool primarily for testing multiple Disney websites (...)."

— Avinash M.

"It sometimes requires extensive configuration and tweaking, which can be a bit time-consuming."

— Ray S.

"Its versatility and compatibility with multiple programming languages is a huge advantage."

— Yacob B.

4. Httpx: The Modern Python HTTP Client

Httpx is another Python HTTP client library that supports asynchronous operations. But unlike aiohttp, this tool is designed to provide a unified synchronous and asynchronous API focusing on user-friendliness.

Other features also make httpx stand out as a Python Requests alternative. For example, it provides built-in support for HTTP/2 connections, a newer version of the HTTP protocol that provides better performance and addresses the drawbacks of the previous version (HTTP/1.1).

It also supports streaming responses, which allows you to handle large datasets or files without loading the entire content into memory at once. Lastly, httpx is a popular library with an active developer community and 6.4k GitHub stars.

👍 Advantages

- Asynchronous support

- Sync compatibility

- WebSocket communication available through third-party extensions.

- Automatic JSON decoding.

- HTTP/2 and HTTP/1.1 support.

- Fully type annotated.

👎 Disadvantages

- Larger size compared to other HTTP client libraries.

- Smaller community than more established libraries.

👏 Testimonials

"I've been using httpx in production now for a couple of months. I switched from Requests when I realized I needed async support and it has been a dream to use."

— Daze.

"Fully type annotated. This is a huge win compared to Requests."

— Yegle.

"httpx makes a pretty good first impression on me. However, I am missing one thing: The features this offers over the popular Requests library do not seem to require httpx to be a competitor, but an extension or fork."

— Felk.

"The huge win against requests is that httpx is fully async. You can download 20 files in parallel without too much effort. "

— Jordic.

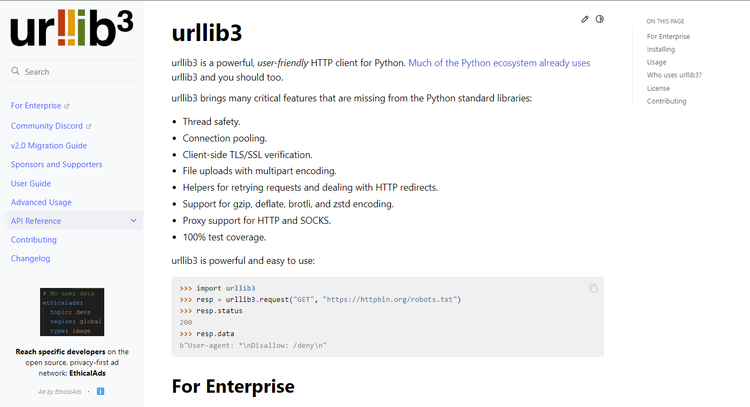

5. urllib3: Highly Customizable HTTP Client

As mentioned earlier, urllib3 is a dependency for the Requests library. But it also works as a standalone Python HTTP library.

You're probably wondering: since Requests is built on urllib3, don't they share the same functionalities?

To some extent, they do. However, Requests abstracts urllib3 functionalities to create an intuitive API. On the other hand, urllib3 offers low-level control and full customization support, which allows you to configure almost every aspect of your requests.

For example, urllib3 allows complete customization of the `ConnectionPool` instances. This can include custom timeouts, retries, and maximum connection limits. This level of control enables you to tailor the behavior of HTTP connections to meet specific requirements.
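As a sketch of that kind of configuration, the snippet below builds a `PoolManager` with explicit retry, timeout, and pool-size settings. The specific numbers and the `build_pool_manager` name are illustrative assumptions, not recommendations.

```python
import urllib3
from urllib3.util import Retry, Timeout


def build_pool_manager():
    """Create a PoolManager with custom retries, timeouts, and pool limits."""
    retries = Retry(
        total=3,                           # give up after three retries
        backoff_factor=0.5,                # back off exponentially between tries
        status_forcelist=[502, 503, 504],  # also retry on these status codes
    )
    timeout = Timeout(connect=2.0, read=5.0)
    return urllib3.PoolManager(
        num_pools=5,   # number of per-host pools to keep cached
        maxsize=10,    # connections kept alive per host pool
        retries=retries,
        timeout=timeout,
    )
```

Requests made through the returned manager, such as `http.request("GET", url)`, inherit these settings unless a call overrides them.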

👍 Advantages

- Highly customizable.

- Connection pooling.

- Thread safety.

- SSL/TLS certificate verification.

- Automatic content decompression.

- Helpers for flexible retry mechanisms.

- Large developer community.

- Well-structured documentation.

👎 Disadvantages

- Lacks native JSON support.

- More code verbosity.

👏 Testimonials

"Urllib3 has let me pull data in an automated manner in ways I previously could only have done by hand one by one. It's heavily sped up my data analysis workflow."

— Mike A.

"We use urllib3 to Helpers for retrying requests and dealing with HTTP redirects."

— Varsha P.

"Advanced features are not easy to use like client side authentication, setting client certificates."

— James G.

"My favorite feature is that urllib3 has helpers for managing redirections at the target url."

— Nicodemus N.

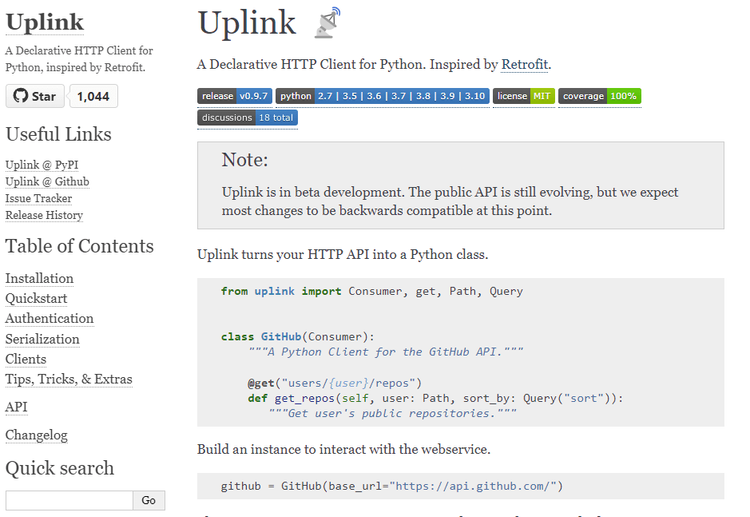

6. Uplink: Declarative Python HTTP Client

Uplink is an open-source Python HTTP client. While it is still in beta development, it promises backward compatibility for most updates. This tool adopts a declarative approach, which allows you to define API interactions using Python classes and methods. This makes the code more readable and closely aligned with the API structure.

For non-blocking (asynchronous) requests, Uplink offers support for aiohttp and Twisted, which enables you to make multiple requests concurrently using either Python's asyncio framework (through aiohttp) or Twisted's event loop. While this tool is relatively new, it has an active developer community and boasts over 1k GitHub stars.

👍 Advantages

- Non-blocking support for aiohttp and Twisted.

- Automatic serialization and deserialization.

- Can integrate with external plugins to extend functionalities.

- Built-in support for basic authentication.

- Supports dynamic path and query parameters.

👎 Disadvantages

- Limited community and ecosystem.

- Not as feature-rich as other HTTP clients.

👏 Testimonials

"...We want a client-side API for our apps. Uplink is the quickest and simplest way to build just that client-side API. Highly recommended."

— Michael Kennedy.

"...The limited community support became evident as I struggled to find solutions to my specific problems. "

— Sam Y.

"Uplink’s intelligent usage of decorators and typing leverages the most pythonic features in an elegant and dynamic way."

— Or Carmi.

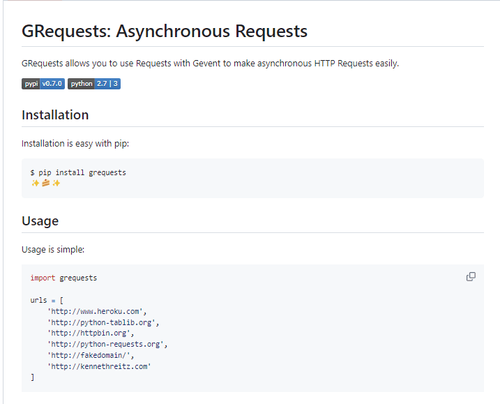

7. GRequests: Asynchronizing Python Requests

GRequests is a Python library that extends the popular Requests library with asynchronous HTTP requests powered by the gevent library. It allows you to make multiple HTTP requests concurrently in a non-blocking manner, which can be particularly useful for scenarios where performance is critical.

The syntax and structure of GRequests are similar to those of the Requests library. Therefore, you can easily transition to asynchronous requests with minimal code changes. GRequests also has a solid following, with over 4k stars and 300+ forks on GitHub.

👍 Advantages

- Provides support for asynchronous requests using Gevent.

- Requests API compatibility

- Familiar syntax.

- Easy to use

- High performance.

👎 Disadvantages

- Relies on additional dependency for asynchronous support.

- Code could become challenging to maintain due to nested callbacks.

👏 Testimonials

"GRequests has significantly enhanced the performance of our project by providing a seamless way to handle asynchronous HTTP requests."

— Godfrey.

"The learning resources for GRequests, especially in comparison to more established libraries, could be more extensive. "

— Selma B.

"The documentation is thorough and well-organized. So it was easy to get started."

— Gabby.

Conclusion

Although Requests is Python's most popular HTTP library, there may be better choices for some use cases. We've explored seven powerful options, with ZenRows leading the way as the best web-scraping Python Requests alternative. This web scraping API offers headless browser functionalities and everything you need to scrape at scale without getting blocked.