If you've ever tried to scrape a website protected by Cloudflare's anti-bot system, you know the frustration of being blocked or slowed down by it. But worry no more, because cfscrape is here to save the day!

In this cfscrape tutorial, we'll explore the magic of this Python module that allows you to bypass Cloudflare protection and scrape websites: from setting it up in Python to practical scenarios and common errors to watch out for.

So grab your Python skills, and let's dive into the world of web scraping without the hassle of anti-bot measures.

What Is cfscrape?

Simply put, cfscrape is a Python module that allows users to bypass Cloudflare's anti-bot protection system when web scraping.

When a user accesses a website that has implemented Cloudflare protection, the user's browser is presented with a JavaScript challenge, which contains a series of tasks that must be completed in order to access the website. These tasks can include solving a CAPTCHA, completing a JavaScript puzzle or performing some calculations.

The purpose is to distinguish human users from bots and prevent the latter from accessing the website and potentially causing harm, such as launching a DDoS attack. However, this can also be a problem for users trying to access the website for legitimate purposes, such as web scraping. This is where cfscrape comes into play.

The Python library developed by a community on GitHub usually succeeds in bypassing the challenges by emulating a web browser, thereby convincing the website that the request is coming from an actual user rather than a scraper.

Now that we've got a basic understanding of what cfscrape is and how it works, let's dive into how to set it up and use it in Python.

How Do You Use cfscrape?

Let's say you're trying to scrape the Glassdoor website, protected by Cloudflare. You try using the standard requests library to access the website with a simple scraper:

import requests

scraper = requests.get('https://www.glassdoor.com')

print(scraper.text)

However, instead of the desired data, you get a 403 error. Such a response usually means the website's protection measures have flagged you as a bot and blocked your connection attempts.

<!doctype html><html lang="en"><head><title>HTTP Status 403 Forbidden</title>…
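Before parsing anything, it can help to confirm whether you received a block page rather than real content. The snippet below is only a heuristic sketch and not part of requests or cfscrape: the looks_blocked name, the status codes and the title markers are all hypothetical choices made for illustration.

```python
import re

# Hypothetical heuristics; adjust them to the block pages you actually see.
BLOCK_STATUS_CODES = {403, 429, 503}
BLOCK_TITLE_MARKERS = ("forbidden", "access denied", "just a moment")


def looks_blocked(status_code, html):
    """Return True if the response resembles an anti-bot block page."""
    if status_code in BLOCK_STATUS_CODES:
        return True
    # Fall back to the page title, since some block pages come with a 200 status
    match = re.search(r"<title[^>]*>(.*?)</title>", html, re.IGNORECASE | re.DOTALL)
    title = match.group(1).strip().lower() if match else ""
    return any(marker in title for marker in BLOCK_TITLE_MARKERS)
```

With a requests response, you'd call it as looks_blocked(response.status_code, response.text) and only move on to parsing when it returns False.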

Let's see how to fix that by making the most of cfscrape right now.

How Do I Use cfscrape in Python?

Follow the next steps to use cfscrape in Python in order to scrape a Cloudflare-protected website.

Step 1: Install cfscrape

First, install cfscrape by running the following command in your terminal:

pip install cfscrape

Step 2: Code your scraper

Once you've installed the module, import it in your Python code and call the create_scraper() function to create a scraper object. Then, use that object to access the Cloudflare-protected website by calling its get() method and passing in the URL as an argument. The example also saves the returned HTML to a file:

import cfscrape

scraper = cfscrape.create_scraper()
response = scraper.get('https://www.glassdoor.com/about')
print(response.text)

# Save the HTML to a file for later inspection
with open('./file.html', 'w', encoding='utf-8') as file:
    file.write(response.text)

The response object returned by the get() method contains the HTML of the page, which you can then parse or scrape as you would any other HTML content.
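Since a blocked request still returns HTML, you may want to save the page only when the request succeeded. Here's a minimal sketch of that idea; save_if_ok is a hypothetical helper name, and treating only status 200 as success is an assumption you may want to relax.

```python
from pathlib import Path


def save_if_ok(status_code, html, path):
    """Write html to path as UTF-8 only when the status code is 200."""
    if status_code != 200:
        return False
    Path(path).write_text(html, encoding="utf-8")
    return True
```

With the scraper above, that would look like save_if_ok(response.status_code, response.text, './file.html').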

That's it! With just a few lines of code, you can bypass Cloudflare protection using Python and cfscrape and successfully scrape many Cloudflare-protected websites.

Step 3: Combining cfscrape with Other Libraries

Hold on, there's more! One of the great things about cfscrape is that it can be combined with other Python libraries. For example, you can use cfscrape to bypass Cloudflare protection and then use a library like BeautifulSoup to parse and extract data from the HTML content.

In the example below, we use cfscrape to send a GET request to Glassdoor and retrieve the HTML content. Then, the response text is passed to BeautifulSoup, which parses the HTML and extracts specific data elements, such as the URLs of images displayed on the page.

import cfscrape
from bs4 import BeautifulSoup

scraper = cfscrape.create_scraper()
response = scraper.get('https://www.glassdoor.com/about')
soup = BeautifulSoup(response.text, 'html.parser')

# Print the src attribute of every image on the page
for img in soup.find_all('img'):
    print(img.get('src'))

Here's our output:

https://about-us.glassdoor.com/app/uploads/sites/2/2022/10/2022_about-us-hero_x.svg

https://about-us.glassdoor.com/app/uploads/sites/2/2022/10/2022_about-us-for-job-seekers_x-1.svg

https://about-us.glassdoor.com/app/uploads/sites/2/2022/10/2022_about-us-for-employees_x-1.svg

…

As you can see, by combining cfscrape with other Python modules, you can build powerful web scrapers.
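One detail to keep in mind when collecting src attributes: they can be relative paths, and img.get('src') returns None for images without that attribute. As a small sketch, the hypothetical helper below (absolute_image_urls isn't part of cfscrape or BeautifulSoup) skips empty values and resolves the rest against the page URL using the standard library.

```python
from urllib.parse import urljoin


def absolute_image_urls(srcs, page_url):
    """Resolve each non-empty src value against the URL of the page it came from."""
    return [urljoin(page_url, src) for src in srcs if src]
```

In the scraper above, you could call it as absolute_image_urls((img.get('src') for img in soup.find_all('img')), response.url).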

However, you'll sometimes run into errors, so let's look at the most common ones!

Troubleshooting Common Errors in cfscrape

You may encounter several common errors when using cfscrape:

- ConnectionError lets us know of a problem connecting to the website. This can happen if the website is down or if there's a problem with your internet connection. It comes from the requests library, which cfscrape is built on.

- CloudflareCaptchaError indicates Cloudflare has detected that the request is being made by a bot and has presented a CAPTCHA challenge. In this case, you'll need to solve the CAPTCHA manually or try accessing the page again.

- CloudflareChallengeError is raised when cfscrape is unable to automatically solve the Cloudflare challenge. This can happen if the challenge presented has changed or if there's a bug in cfscrape. In this case, you'll need to update cfscrape or try a different library for bypassing Cloudflare's protection, like ZenRows.

To handle them, use try and except statements to catch any errors that may occur when using cfscrape. If an error is caught, the corresponding except block will be executed, and you can handle the error as needed. If no errors occur, the else block will be executed, and you can process the response as required.

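import cfscrape
import requests

your_url = 'https://example.com'  # placeholder: replace with the page you want to scrape

try:
    # Create a scraper object
    scraper = cfscrape.create_scraper()
    # Use the scraper object to access the website
    response = scraper.get(your_url)
except requests.exceptions.ConnectionError:
    # cfscrape is built on requests, so connection failures surface as its ConnectionError
    print('Could not connect to the website')
except cfscrape.CloudflareCaptchaError:
    # Handle captcha error
    print('Cloudflare presented a CAPTCHA')
except cfscrape.CloudflareChallengeError:
    # Handle challenge error
    print('cfscrape could not solve the challenge')
else:
    # Process the response as needed
    print(response.status_code)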

…
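Since trying again is one of the suggested remedies, you might wrap the request in a small retry loop. The sketch below is one possible approach under stated assumptions, not a cfscrape feature: fetch_with_retries, its parameters and the exponential backoff schedule are hypothetical, and catching Exception is deliberately broad for illustration.

```python
import time


def fetch_with_retries(fetch, url, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying with exponential backoff whenever it raises."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for attempt in range(attempts):
        try:
            return fetch(url)
        except Exception as error:  # narrow this to the errors you expect
            last_error = error
            # Wait 1x, 2x, 4x... the base delay, but not after the final attempt
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)
    raise last_error
```

You'd pass the scraper's method as the callable, for example fetch_with_retries(scraper.get, your_url). Note that this only retries when an exception is raised; a block page returned with a 403 status won't trigger a retry on its own.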

Limitations of cfscrape and Alternatives

Although cfscrape is a valuable tool for bypassing anti-bot protection, it may not be enough to get past the latest security measures implemented by Cloudflare because it hasn't been updated in recent years.

Just take a look at this example:

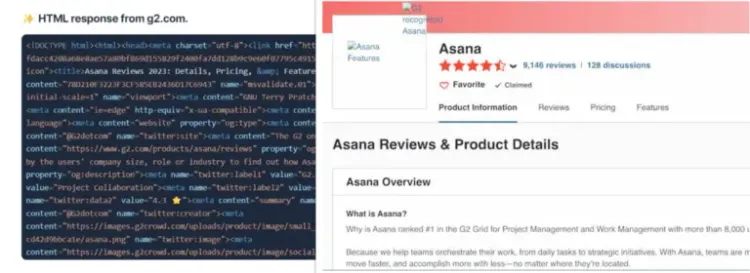

When attempting to access the Asana page on G2.com using cfscrape, the anti-bot service detects an automated browser session and blocks the attempt, resulting in an Access denied message.

<!DOCTYPE html>

<html lang="en-US">

<head>

<title>Access denied</title>

As an alternative, ZenRows is a more reliable tool for bypassing Cloudflare's protection because it has a higher success rate and is updated very often.

To scrape the G2 web page like a boss, sign up to get your free API key in seconds and try the following:

# pip install zenrows
from zenrows import ZenRowsClient

client = ZenRowsClient("YOUR_API_KEY")
url = "https://www.g2.com/products/asana/reviews"

# Enable JavaScript rendering, Antibot and Premium proxy features
params = {
    "js_render": "true",
    "antibot": "true",
    "premium_proxy": "true"
}

response = client.get(url, params=params)
print(response.text)

The URL is passed to the client.get() method and Cloudflare's protection is smoothly bypassed thanks to ZenRows' anti-bot and premium proxy capabilities. No more frustrating blocks or errors.

Also, just as with cfscrape, you can combine ZenRows with other libraries.

Conclusion

This tutorial has covered the basics of using cfscrape, a Python module for bypassing Cloudflare's anti-bot protection measures when doing web scraping. Also, we discussed some common errors you might encounter when using cfscrape and how to handle them.

Overall, while cfscrape is a useful tool for bypassing Cloudflare's protection, ZenRows is a much better option due to its ease of use, comprehensive feature set, and reliability. If you're looking for a tool to help you access protected websites, we highly recommend giving ZenRows a try.
