You might not have heard of browser fingerprinting, but it's a widespread technique that can get you blocked while web scraping.

Nowadays, many web servers gather information about your device to form a unique profile, or fingerprint. So when you visit web pages, they can identify you and serve content accordingly. We'll learn how fingerprinting works and how to get past it to extract the data you care about.

Let's get started!

What Is Browser Fingerprinting?

Browser fingerprinting is a process of identifying web clients by collecting specific data points from devices, HTTP connections, and software features.

Think of it this way: a real fingerprint image is a unique identifier that looks like a combination of lines and curves, right? Now, in the digital world, each line and curve encodes information from your end, including:

- Operating system type and language.

- Browser type, version, and extensions.

- Time zone.

- Language and fonts.

- Battery level.

- Keyboard layout.

- User-agent.

- CPU class.

- Navigator properties.

- Screen resolution.

- Other.

Although the details above are generic, it's extremely rare for two users to have a hundred percent matching data points. Each one generates a unique fingerprint, as even a slight variation results in a different outcome.

For example, hundreds of millions of users visit Google using Chrome. But how many use Chrome with a single extension in a 1366x768 resolution on an HP OMEN PC with 16 GB of RAM and 4GB Nvidia graphics on driver version 25.20.99.9221? Not many.
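To put a rough number on that intuition, the sketch below adds up the identifying information (in bits) carried by each attribute and estimates how many users would share all of them. It assumes the attributes are statistically independent, and the share values are made-up placeholders for illustration, not measured statistics.

```python
import math
from typing import Mapping

# Hypothetical share of users having each attribute value (illustrative only).
ATTRIBUTE_SHARES = {
    "browser=Chrome": 0.65,
    "resolution=1366x768": 0.10,
    "extensions=exactly one": 0.05,
    "gpu_driver=25.20.99.9221": 0.001,
}


def surprisal_bits(share: float) -> float:
    """Bits of identifying information in a value seen in `share` of users."""
    return -math.log2(share)


def combined_bits(shares: Mapping[str, float]) -> float:
    """Total bits across attributes, assuming they are independent."""
    return sum(surprisal_bits(share) for share in shares.values())


def expected_matches(population: int, shares: Mapping[str, float]) -> float:
    """Expected number of users in `population` matching every attribute."""
    return population * math.prod(shares.values())


if __name__ == "__main__":
    print(f"{combined_bits(ATTRIBUTE_SHARES):.1f} bits of identifying information")
    print(f"{expected_matches(100_000_000, ATTRIBUTE_SHARES):.0f} expected matching users")
```

Each extra attribute multiplies the shares together, which is why a handful of individually common values can still single out a small group.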

What Is the Purpose of Browser Fingerprinting?

Although this guide focuses on browser fingerprinting regarding web scraping, websites identify and track users for numerous reasons.

For example, browser fingerprinting protection finds wide adoption in cyber security due to the exponential increase in cyber attacks. Namely, systems develop profiles with natural user behavior based on specific parameters in clients' fingerprints to isolate bots and threats. Additionally, websites trying to protect user accounts track fingerprint details to notify the owners of suspicious login attempts.

Another common use is to improve user experience because websites can provide personalized content by identifying location-specific details, such as language and time zone.

Advertising networks also leverage fingerprint details to offer product and service recommendations to their target audiences. For example, if they know your PC specifications, such as GPU and screen resolution, you may get ads for new PC models.

Browser Fingerprinting vs. Cookie Tracking

Note that browser fingerprinting is different from cookie tracking. While the latter is the conventional solution for identifying and tracking web users, privacy laws are slowly taking cookies out of the game. But how exactly do they differ?

Cookies are small pieces of data that a web server sends to a user's browser. The browser saves them and sends them back with later requests to the same site, which lets the site recognize the user.

End-users have some control over this type of tracking, as websites often ask them to accept it. So, if you delete or reject these cookies, the website can no longer recognize you or track your activities through them. In contrast, you have no say in browser fingerprinting. Once your browser sends a request to the web server, your data is collected and grouped into a unique profile.

Let's see how websites access the data they need to generate a fingerprint in more detail.

How Does Browser Fingerprinting Work?

When you visit a page, your web client sends a request to the site's server along with bits of data required to establish a connection. That would typically include your IP address and browser properties, but that's not everything that can be sent, as seen earlier.

Some of the data reaches the web server with the first packet of the connection. But, in most cases, the website injects JavaScript to generate the necessary fingerprint information, depending on the technique. These scripts usually run in the background and are difficult to distinguish from the site's regular code.

Because each web client has different values for the data points these scripts query, websites create unique fingerprints, identifying each user. For instance, web scrapers generate bot-like fingerprints, leading to Access denied or an error 403 during web scraping.
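As a rough illustration of the data that arrives with the request itself, here is a minimal server-side sketch that hashes the client IP, the order of the HTTP headers, and a few header values. The function name, the set of watched headers, and the sample values are assumptions made for this example; real systems combine many more signals.

```python
import hashlib
from typing import List, Tuple

# Headers whose values are included in the hash (an illustrative choice).
WATCHED_HEADERS = {"user-agent", "accept", "accept-language", "accept-encoding"}


def passive_fingerprint(ip: str, headers: List[Tuple[str, str]]) -> str:
    """Hash the IP, the header order, and the values of the watched headers."""
    # Header order differs between HTTP clients, so it is kept as a signal.
    order = ",".join(name.lower() for name, _ in headers)
    values = "|".join(
        f"{name.lower()}={value}"
        for name, value in headers
        if name.lower() in WATCHED_HEADERS
    )
    raw = f"{ip}\n{order}\n{values}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    browser_like = [
        ("Host", "example.com"),
        ("User-Agent", "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"),
        ("Accept", "text/html"),
        ("Accept-Language", "en-US,en;q=0.9"),
    ]
    script_like = [
        ("Host", "example.com"),
        ("User-Agent", "python-requests/2.31.0"),
        ("Accept", "*/*"),
    ]
    print(passive_fingerprint("203.0.113.7", browser_like))
    print(passive_fingerprint("203.0.113.7", script_like))
```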

Before we discuss the various fingerprinting techniques, let's analyze real-life examples to understand better how they work.

Browser Fingerprinting Examples

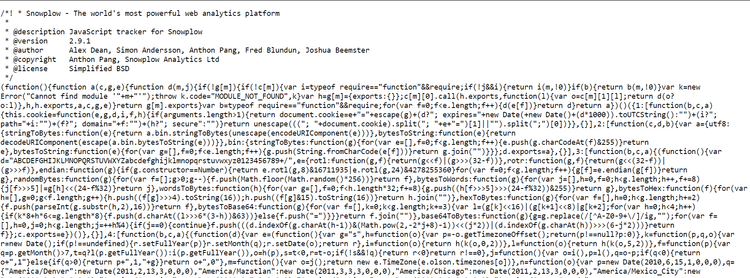

As a first example, we'll review a JavaScript file from Keywee, a platform that uses natural language processing and machine learning to help publishers and marketers create, distribute, and measure the performance of their content.

This script you see above is minified, so it's difficult to deduce what it does. However, we can use an online prettifier on the code to better understand it. Here's the result.

Starting from line 1415, we can see functions for collecting data points.

17: [function(b, c, a) {
    (function() {
        var l = b("../lib_managed/lodash"),
            k = b("murmurhash").v3,
            g = b("jstimezonedetect").jstz.determine(),
            e = b("browser-cookie-lite"),
            h = typeof a !== "undefined" ? a : this,
            j = window,
            d = navigator,
            i = screen,
            f = document;
        h.hasSessionStorage = function() {
            try {
                return !!j.sessionStorage
            } catch (m) {
                return true
            }
        };
        h.hasLocalStorage = function() {
            try {
                return !!j.localStorage
            } catch (m) {
                return true
            }
        };
        h.localStorageAccessible = function() {
            var m = "modernizr";
            if (!h.hasLocalStorage()) {
                return false
            }
            try {
                j.localStorage.setItem(m, m);
                j.localStorage.removeItem(m);
                return true
            } catch (n) {
                return false
            }
        };
        h.hasCookies = function(m) {
            var n = m || "testcookie";
            if (l.isUndefined(d.cookieEnabled)) {
                e.cookie(n, "1");
                return e.cookie(n) === "1" ? "1" : "0"
            }
            return d.cookieEnabled ? "1" : "0"

On line 1461, the code snippet gathers several fingerprint components in variable p, as shown below.

h.detectSignature = function(r) {
    var p = [d.userAgent, [i.height, i.width, i.colorDepth].join("x"), (new Date()).getTimezoneOffset(), h.hasSessionStorage(), h.hasLocalStorage()];
    var m = [];
    if (d.plugins) {
        for (var q = 0; q < d.plugins.length; q++) {
            if (d.plugins[q]) {
                var n = [];
                for (var o = 0; o < d.plugins[q].length; o++) {
                    n.push([d.plugins[q][o].type, d.plugins[q][o].suffixes])
                }
                m.push([d.plugins[q].name + "::" + d.plugins[q].description, n.join("~")])
            }
        }
    }
    return k(p.join("###") + "###" + m.sort().join(";"), r)
};

In this case, the collected data includes:

- User-agent string.

- Screen height, width, and color depth.

- Time zone offset.

- Session storage and local storage availability.

- Installed plugins.

Then, on line 1475, function k returns an integer hash code of the fingerprint components.
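To make the structure of that function easier to follow, here is a simplified Python port. It is only a sketch: `zlib.crc32` stands in for the MurmurHash v3 function the script uses (MurmurHash isn't in Python's standard library), plugins are reduced to name and description pairs, and the sample values are invented, so the resulting numbers won't match what the real script produces.

```python
import zlib
from typing import List, Sequence, Tuple


def detect_signature(
    components: Sequence[object],
    plugins: List[Tuple[str, str]],
    seed: int = 0,
) -> int:
    """Join the components with '###', append the sorted plugins, and hash."""
    head = "###".join(str(component) for component in components)
    # Sorting makes the result independent of the order plugins are listed in.
    tail = ";".join(sorted(f"{name}::{description}" for name, description in plugins))
    # crc32 is a stand-in for MurmurHash v3; the seed is its starting value.
    return zlib.crc32(f"{head}###{tail}".encode("utf-8"), seed)


if __name__ == "__main__":
    components = [
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",  # user agent
        "768x1366x24",  # screen height x width x color depth
        -60,  # time zone offset in minutes
        True,  # session storage available
        True,  # local storage available
    ]
    plugins = [("PDF Viewer", "Portable Document Format")]
    print(detect_signature(components, plugins))
```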

Like the example above, most websites call JavaScript files to identify and isolate web clients.

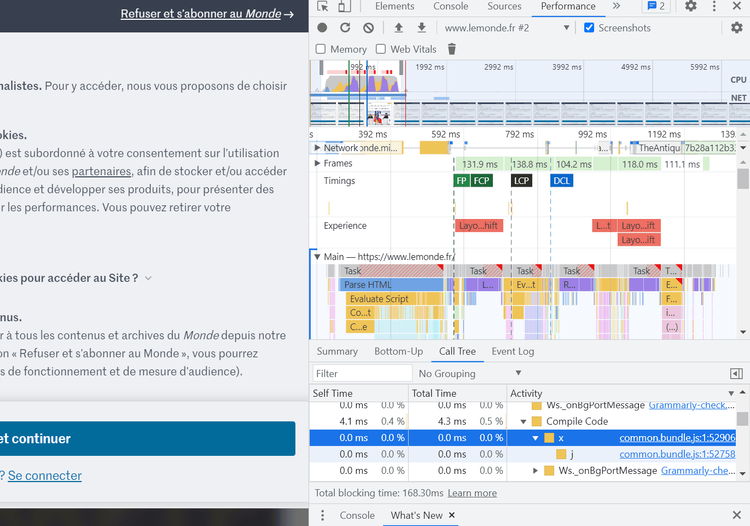

Now, let's try to locate the browser fingerprinting script on Le Monde, a French news website.

If we inspect the performance analyzer in the developer tools and navigate to the call tree, we can locate the first function call.

Open this script in a new tab by clicking on the highlighted link in the image above.

Note that browser fingerprinting can be dependent on location or IP address. In other words, it can be turned on only for a set of IP addresses, so this script might be different for you.

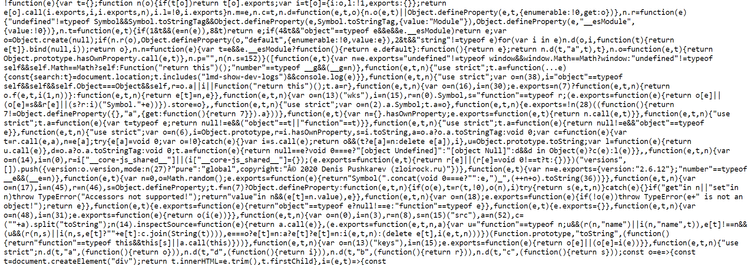

That said, let's beautify this script to get a better understanding of what it does. You can find the complete script here.

On lines 1038 and 1042, we see data points stored in variables e and p. They include:

- User-agent string.

- Browser vendor.

- Window width and height.

f = () => {
        const e = navigator.userAgent || navigator.vendor || window.opera;
        return -1 !== e.indexOf("FBAN") || -1 !== e.indexOf("FBAV")
    },
    p = () => window.innerWidth || document.documentElement.clientWidth || document.body.clientWidth;
let m = !1;
const h = () => {
        const e = sessionStorage.getItem("pageinsession");
        if (!0 === m && e) return e.length;
        if (m = !0, "reload" !== d()) {
            const t = e && "".concat(e, "i") || "i";
            return sessionStorage.setItem("pageinsession", t), t.length
        }
        return e ? e.length : 1
    },

Following the same principle, other functions may collect additional fingerprint data in this script.

Fingerprinting Techniques

Sites use multiple methods to interact with web clients' properties in order to access and identify user data. Let's look at some of them!

Canvas Fingerprinting

The HTML5 Canvas is an API with built-in objects, methods, and properties for drawing texts and graphics on a canvas. Browsers can leverage its features to render website content.

In 2012, Mowery and Shacham found that the final user-visible canvas graphic is directly affected by several factors, including:

- Operating system.

- Browser version.

- Graphics card.

- Installed fonts.

- Sub-pixel hinting.

- Antialiasing.

That means the produced images and text differ depending on the device's graphical capabilities. As a result, browser fingerprinting websites request web clients to process canvas images. That generates valuable information that sites collect and store in their browser fingerprint database.
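The flow could look roughly like the sketch below. `CANVAS_JS` is an illustrative drawing routine (not taken from any specific site) that returns the rendered canvas as a data URL; with Selenium, for instance, it could be passed to `driver.execute_script()`. The `canvas_hash` helper, a name made up for this example, then reduces that string to a compact identifier, as a site might do before storing it.

```python
import hashlib

# JavaScript that draws text and a shape, then returns the pixels as a data URL.
# The exact drawing commands are an assumption; any fixed routine would do.
CANVAS_JS = """
const canvas = document.createElement('canvas');
const ctx = canvas.getContext('2d');
ctx.textBaseline = 'top';
ctx.font = '16px Arial';
ctx.fillStyle = '#f60';
ctx.fillRect(10, 5, 80, 20);
ctx.fillStyle = '#069';
ctx.fillText('fingerprint', 2, 8);
return canvas.toDataURL();
"""


def canvas_hash(data_url: str) -> str:
    """Reduce a canvas data URL to a fixed-length hexadecimal identifier."""
    return hashlib.sha256(data_url.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    # Placeholder strings standing in for the output of two different devices.
    device_a = "data:image/png;base64,AAAA"
    device_b = "data:image/png;base64,AAAB"
    print(canvas_hash(device_a))
    print(canvas_hash(device_b))
```

Because the hash covers every pixel, even a tiny rendering difference in antialiasing or font hinting yields a different identifier.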

To learn more about how websites use HTML5 Canvas features to block web scrapers, check out our in-depth article on canvas fingerprinting (to be published soon).

WebGL

Like HTML5 Canvas, WebGL is a graphics API, designed for rendering interactive 2D and 3D graphics.

Yes, you guessed it! These graphics are rendered differently per the device's graphical capabilities. So, browser fingerprinting websites can task a browser's WebGL API to produce 3D images to extract a device's features from the result.

AudioContext

The Web Audio API is an interface for processing audio. Any device can generate audio signals and apply certain compression levels and filters by linking audio modules together to get a specific result.

AudioContext fingerprinting works the same way as HTML5 Canvas and WebGL: the processed audio signals present differences based on the device's audio stack (software and hardware features).

That is a relatively novel method for collecting fingerprinting data, so only a few websites have scripts that implement the Web Audio API.

Browser Extensions

Browser extensions are small software modules that extend the functionalities and features of a browser. Some of the most popular ones are ad blockers and VPNs.

That said, the most important element for browser-fingerprinting websites is how the extensions are integrated. Typically, they get some of their resources via the web. So, by checking for specific URLs, websites can query the presence or absence of an extension. For example, to get the logo of an extension, the site can use its knowledge of the device and follow a URL of the form extension://<extensionID>/<pathToFile> to retrieve it.

However, not every extension has such accessible resources, and not all are detectable using this technique.

JavaScript Standards Conformance

Websites can also identify users based on their underlying JavaScript engine. That's possible because these engines execute JavaScript code differently depending on the browser features. Even subsequent browser versions present differences. Therefore, websites can test web clients to see if they compile according to the JavaScript standard. Then they analyze the supported features to extract fingerprinting data.

CSS properties

Web browsers have different default values for specific CSS properties. By reading these properties' values, a website can determine which browser is used to access the site. For example, Firefox-specific CSS properties carry the -moz- prefix, while Safari and Chrome use the -webkit- prefix.
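A minimal sketch of the prefix check follows. It assumes the site has already collected the list of CSS property names the browser exposes (for example, by enumerating a computed style in JavaScript); the function name and return labels are made up for this illustration. The -moz- prefix is tested first because Firefox also accepts some -webkit- aliases for compatibility.

```python
from typing import Iterable


def guess_engine(property_names: Iterable[str]) -> str:
    """Guess the browser engine from vendor prefixes in CSS property names."""
    names = [name.lower() for name in property_names]
    # Check -moz- first: Firefox also exposes some -webkit- prefixed aliases.
    if any(name.startswith("-moz-") for name in names):
        return "Gecko (Firefox)"
    if any(name.startswith("-webkit-") for name in names):
        return "WebKit or Blink (Safari, Chrome)"
    return "unknown"


if __name__ == "__main__":
    print(guess_engine(["color", "-moz-user-select", "-webkit-text-stroke"]))
    print(guess_engine(["color", "-webkit-line-clamp"]))
```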

Fonts

One less common but effective browser fingerprinting technique is identifying users based on rendered text, because browsers on different devices render the same font style and character with different bounding boxes. Consequently, sites can query bounding boxes to collect fingerprinting data.

Transport Layer Security (TLS)

When web clients send HTTPS requests to a website, they do so over Transport Layer Security. The web client must create a secure connection to enable communication, which involves exchanging valuable data with the web server via the TLS handshake. Websites use the information in the exchange to identify users, as these parameters differ per web client.
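The article doesn't name a specific scheme, but one widely used way to condense handshake parameters is JA3, which joins five fields of the ClientHello into a string and takes its MD5 hash. The sketch below shows that construction with invented sample values; it skips details such as filtering out GREASE values, so treat it as an illustration rather than a complete implementation.

```python
import hashlib
from typing import Sequence


def ja3_string(
    version: int,
    ciphers: Sequence[int],
    extensions: Sequence[int],
    curves: Sequence[int],
    point_formats: Sequence[int],
) -> str:
    """Build the JA3 string: fields joined by ',', values inside a field by '-'."""
    fields = [[version], ciphers, extensions, curves, point_formats]
    return ",".join("-".join(str(value) for value in field) for field in fields)


def ja3_hash(
    version: int,
    ciphers: Sequence[int],
    extensions: Sequence[int],
    curves: Sequence[int],
    point_formats: Sequence[int],
) -> str:
    """Return the MD5 digest of the JA3 string as 32 hexadecimal characters."""
    raw = ja3_string(version, ciphers, extensions, curves, point_formats)
    return hashlib.md5(raw.encode("ascii")).hexdigest()


if __name__ == "__main__":
    # Sample values only; a real ClientHello lists many more ciphers and extensions.
    print(ja3_string(771, [4865, 4866], [0, 23], [29, 23], [0]))
    print(ja3_hash(771, [4865, 4866], [0, 23], [29, 23], [0]))
```

Since HTTP libraries and browsers offer different cipher suites and extensions, two clients sending the same HTTP headers can still end up with different hashes.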

We covered TLS fingerprinting in detail, so let's focus on how to avoid browser fingerprinting this time.

How to Bypass Browser Fingerprinting?

In theory, you can avoid most browser fingerprinting by disabling JavaScript, since websites rely on scripts to collect much of the data behind your fingerprint. Some anti-fingerprinting solutions use this approach, but it's not reliable: it hurts usability and content availability, as most websites today rely on JavaScript to display content.

Furthermore, there's a difference between avoiding browser fingerprinting as an actual user and a web scraper.

For a regular surfer, the goal is to maintain privacy and mitigate unwanted tracking. For this, browsers adapt their technology to protect users from browser fingerprinting. For example, Chrome proposes to minimize the identifying information shared in a user-agent string.

On the other hand, web scrapers require access to extract data. So, our goal is to blend in at scale, and most web scraping resources will advise you to mimic real user behavior. But how to achieve that without being identified as a bot?

Using headless browsers like Selenium, Playwright, and Puppeteer makes your request seem more natural than an HTTP client. But are they enough?

JavaScript identifies web scrapers using two main concepts. The first one is bot fingerprint leaking, which uses the JavaScript environment to determine whether a web client is an actual browser or a bot. The second is identity fingerprinting, in which the JavaScript environment tracks clients using a unique ID. For example, if your web scraper is tracked with ID 45689, it can be identified after making too many unnatural connections.
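Identity fingerprinting can be pictured as a per-ID request counter. The sketch below keeps a sliding window of request timestamps for each fingerprint ID and flags an ID once it exceeds a threshold. The class name, the limits, and the timestamps are assumptions chosen for illustration; real anti-bot systems weigh many more signals.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class FingerprintRateTracker:
    """Flag fingerprint IDs that send too many requests within a time window."""

    def __init__(self, max_requests: int = 5, window_seconds: float = 10.0) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, fingerprint_id: str, timestamp: float) -> bool:
        """Record one request; return True if the ID now looks automated."""
        hits = self._hits[fingerprint_id]
        hits.append(timestamp)
        # Drop requests that have fallen out of the sliding window.
        while hits and timestamp - hits[0] > self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests


if __name__ == "__main__":
    tracker = FingerprintRateTracker(max_requests=3, window_seconds=10.0)
    for second in range(5):
        flagged = tracker.record("45689", float(second))
        print(f"t={second}s flagged={flagged}")
```

This is why rotating IP addresses alone may not help: if the fingerprint ID stays the same, the counter keeps growing.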

Bot fingerprint leaking is the most common way websites identify and block headless browsers. While they can imitate real user behavior, some default properties may flag them as scrapers. Let's see how and what's the solution!

Headless Browser Leaks

Sadly, browser fingerprinting alone is quite powerful for detecting web scrapers, and headless browsers can make fingerprint identification easier. That's because they set default properties that flag them as bots in the JavaScript execution context. In other words, JavaScript can identify these properties and inform the web server that a bot controls the browser.

Therefore, making our scraping environment undetectable should be our first step to bypassing browser fingerprinting.

One of those snitching properties is headless: true, indicating that the browser operates without GUI elements. Another property leak is navigator.webdriver: true, set that way by Selenium, Playwright, and Puppeteer.
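A detection routine built on such properties can be very small. The sketch below assumes the site's script has already collected a few navigator values into a dictionary and sent them to the server; the key names and the `find_leaks` function are hypothetical. The check for the "HeadlessChrome" token reflects how headless Chrome identifies itself in its default user agent.

```python
from typing import Any, List, Mapping


def find_leaks(properties: Mapping[str, Any]) -> List[str]:
    """Return the automation hints found in the collected navigator values."""
    leaks = []
    if properties.get("webdriver") is True:
        leaks.append("navigator.webdriver is true")
    # Headless Chrome advertises itself in its default user agent string.
    if "HeadlessChrome" in str(properties.get("userAgent", "")):
        leaks.append("user agent mentions HeadlessChrome")
    return leaks


if __name__ == "__main__":
    automated = {
        "webdriver": True,
        "userAgent": "Mozilla/5.0 (X11; Linux x86_64) HeadlessChrome/120.0.0.0",
    }
    regular = {
        "webdriver": False,
        "userAgent": "Mozilla/5.0 (X11; Linux x86_64) Chrome/120.0.0.0",
    }
    print(find_leaks(automated))
    print(find_leaks(regular))
```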

By detecting these property-value pairs, websites identify and block web scrapers powered by headless browsers Selenium and Playwright. So, how do we make these properties undetectable?

How to Plug Fingerprint Leaks?

We can plug leaks by imposing fake values whenever the property is accessed. For example, the following script plugs the navigator.webdriver leak.

Object.defineProperty(navigator, "webdriver", {
    get: function() {
        return false;
    }
});

It uses the Object.defineProperty() method to create or modify the webdriver property on the navigator object, and the get function defines what happens when navigator.webdriver is read. In this case, it always returns false.

You can add this code to the beginning of your web scraping script. That way, when the website checks the value of navigator.webdriver, it'll always return false, and it'll not be able to detect that the browser is automated.

Let's see how to do that for the most popular headless browsers:

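Selenium: Calling execute_script() only patches the page that is currently loaded, so the override is lost on the next navigation. With Chrome, one way to apply it before every page's own scripts run is the DevTools command Page.addScriptToEvaluateOnNewDocument, sent through execute_cdp_cmd(). Here's an example of how to do it in Python.

from selenium import webdriver

# create a new instance of the chrome driver
driver = webdriver.Chrome()

# register the script so it runs in every new document, before the page's own scripts
driver.execute_cdp_cmd(
    "Page.addScriptToEvaluateOnNewDocument",
    {"source": "Object.defineProperty(navigator, 'webdriver', {get: function() {return false}})"},
)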

Playwright: Use the addInitScript() method so the script runs in every new document before the page's own scripts. Calling evaluate() would only affect the page that is already loaded. Check the following example.

const { chromium } = require('playwright');

(async () => {
    const browser = await chromium.launch();
    const context = await browser.newContext();
    const page = await context.newPage();

    // register the script to hide the fact that the browser is automated
    await page.addInitScript(() => {
        Object.defineProperty(navigator, 'webdriver', {get: () => false});
    });

    //... Scraping Logic

    await browser.close();
})();

Puppeteer: The equivalent method is evaluateOnNewDocument(), which runs the script in every new document before the page's own scripts, as shown below.

const puppeteer = require('puppeteer');

(async () => {
    const browser = await puppeteer.launch();
    const page = await browser.newPage();

    // register the script to hide the fact that the browser is automated
    await page.evaluateOnNewDocument(() => {
        Object.defineProperty(navigator, 'webdriver', {get: () => false});
    });

    //... Scraping Logic

    await browser.close();
})();

However, every browser has leak vectors that we must plug individually. Unfortunately, we can't use the same plugging techniques across the board. Instead, we need to tackle each one separately.

Chrome is arguably the most used browser, so we'll focus on it to blend in as much as possible. However, you can use any browser once you learn how to plug these leaks and make your scraping environment undetectable.

Chrome Leaks

There are numerous solutions for analyzing browser leaks and fingerprint values. But they're mostly open-source and rarely keep up with the constantly evolving browser ecosystem. Yet, we'll create a quick script that compares our headless browsers against actual browsers.

We'll use a Python script to compare Playwright and Selenium browsers for this example. It imports two helper modules, checkselenium and checkplaywright, that each expose a run(browser, head, script, url) function and are assumed to exist alongside it.

import sys

from checkselenium import run as run_selenium
from checkplaywright import run as run_playwright


# run function to execute the script
def run(script: str, url=None):
    # data variable to store the result of the script execution
    data = {}
    # loop through the toolkits, selenium and playwright
    for toolkit, toolkit_script in [('selenium', run_selenium), ('playwright', run_playwright)]:
        # loop through the browser, chromium
        for browser in ['chromium']:
            # loop through headless and headful mode
            for head in ['headless', 'headful']:
                # store the result of the script execution in the data variable
                # using the toolkit, head, browser and url as the key
                key = f'{toolkit:<10}:{head:<8}:{browser:<8}:{url or ""}'.strip(':')
                data[key] = toolkit_script(browser, head, script, url)
    # return the data variable
    return data


# main function
if __name__ == "__main__":
    # loop through the result of the run function
    for query, result in run(*sys.argv[1:]).items():
        # print the query and result
        print(f"{query}: {result}")
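The comparison script depends on the checkselenium helper, whose code isn't part of this article. A minimal sketch of what such a module might contain is given below; the `chrome_arguments` helper and the choice to evaluate the script as a JavaScript expression are assumptions for illustration. Selenium is imported inside `run` so the module can be loaded even where Selenium isn't installed.

```python
from typing import Any, List, Optional


def chrome_arguments(head: str) -> List[str]:
    """Translate the 'headless' or 'headful' label into Chrome launch arguments."""
    if head == "headless":
        return ["--headless"]
    if head == "headful":
        return []
    raise ValueError(f"unknown mode: {head!r}")


def run(browser: str, head: str, script: str, url: Optional[str] = None) -> Any:
    """Open Chrome, optionally visit `url`, and return the value of `script`."""
    if browser != "chromium":
        raise ValueError(f"unsupported browser: {browser!r}")

    # Imported lazily so this module loads without Selenium installed.
    from selenium import webdriver

    options = webdriver.ChromeOptions()
    for argument in chrome_arguments(head):
        options.add_argument(argument)

    driver = webdriver.Chrome(options=options)
    try:
        if url:
            driver.get(url)
        # Treat the script as a JavaScript expression and return its value.
        return driver.execute_script(f"return {script};")
    finally:
        driver.quit()
```

A checkplaywright module could follow the same shape, launching Chromium through Playwright and evaluating the same expression on a page.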

Let's test our script using the navigator.webdriver property.

$ python compare.py "navigator.webdriver"
selenium  :headless:chromium: True
selenium  :headful :chromium: True
playwright:headless:chromium: True
playwright:headful :chromium: True

As you can see, our script returns true as a value for both Selenium and Playwright.

One of the common ways websites identify web clients is by testing capabilities. The widely used data points for this test are plugins and MIME types. Websites access these values through the navigator.plugins and navigator.mimeTypes properties.

Let's see the values Selenium and Playwright provide.

$ python compare.py "[navigator.plugins.length, navigator.mimeTypes.length]"
selenium  :headless:chromium: [0, 0]
selenium  :headful :chromium: [5, 2]
playwright:headless:chromium: [0, 0]
playwright:headful :chromium: [5, 2]

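In headless mode, both counts are zero, whereas the headful browsers report five plugins and two MIME types. A regular user's browser wouldn't return empty collections, so this gap is easy to detect. Mimicking user behavior for this scenario can get broad, but this GitHub repository explains how you can correctly mimic these features.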

Launch Flags

It's also worth noting that automated browsers add extra launch flags that can easily be detected in a JavaScript environment. We can identify these flags by enabling debug logs in our headless browsers.

For Selenium, you can enable debug logs by adding the following lines of code.

import logging

from selenium import webdriver
from selenium.webdriver.remote.remote_connection import LOGGER

logging.basicConfig()
LOGGER.setLevel(logging.DEBUG)

driver = webdriver.Chrome()

#... Scraping Logic

That will print all the browser commands sent to the browser during the execution of the Selenium script, producing results like these.

DEBUG:selenium.webdriver.remote.remote_connection:POST http://127.0.0.1:56426/session {"capabilities": {"firstMatch": [{}]}, "desiredCapabilities": {"browserName": "chromium", "version": "", "platform": "ANY"}}

For Puppeteer, we can view the default arguments directly by printing them in the console.

const puppeteer = require('puppeteer');

console.log(puppeteer.defaultArgs());

That should get you the following results.

[ '--disable-background-networking', '--disable-background-timer-throttling', '--disable-backgrounding-occluded-windows', '--disable-breakpad', '--disable-client-side-phishing-detection', '--disable-component-extensions-with-background-pages', '--disable-default-apps', '--disable-dev-shm-usage', '--disable-extensions', '--disable-features=TranslateUI,BlinkGenPropertyTrees', '--disable-hang-monitor', '--disable-ipc-flooding-protection', '--disable-popup-blocking', '--disable-prompt-on-repost', '--disable-renderer-backgrounding', '--disable-setuid-sandbox', '--disable-speech-api', '--disable-sync', '--disable-tab-for-desktop-share', '--disable-translate', '--disable-web-security', '--disable-xss-auditor', '--enable-automation', '--enable-features=NetworkService,NetworkServiceInProcess', '--force-color-profile=srgb', '--metrics-recording-only', '--no-first-run', '--no-pings', '--no-sandbox', '--no-zygote', '--remote-debugging-port=0', '--remote-debugging-address=0.0.0.0', '--single-process' ]

Some of these flags can disable certain features or security measures, so we need to remove them to mimic real user behavior.

Here are some that could be removed:

- --disable-web-security: This flag disables the same-origin policy that prevents a web page from making requests to a different domain than the one that served the web page.

- --disable-xss-auditor: This flag disables the cross-site scripting (XSS) auditor, a feature that detects and blocks malicious scripts trying to inject code into a web page.

- --no-sandbox: This flag disables the security sandbox Chromium uses to isolate the browser's renderer process from the rest of the system.

- --enable-automation: This flag enables browser automation, which can be used to perform tasks such as automated testing. Consequently, if a website detects it, it'll flag your browser as a bot.

Remark: the first three flags switch off browser protections, so a browser launched with them is more vulnerable to certain attacks. Only run with them in a controlled environment.
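Before touching any browser, the filtering step itself can be sketched as a small helper that takes a list of default arguments and drops the ones named above. The function and constant names are made up for this example, and the sample list is just a short excerpt of the defaults printed earlier.

```python
from typing import Iterable, List, Set

# Flags discussed above that we don't want to launch the browser with.
SUSPICIOUS_FLAGS = {
    "--disable-web-security",
    "--disable-xss-auditor",
    "--no-sandbox",
    "--enable-automation",
}


def flag_name(argument: str) -> str:
    """Return the flag without its value, e.g. '--a=b' becomes '--a'."""
    return argument.split("=", 1)[0]


def remove_flags(arguments: Iterable[str], blocked: Set[str] = SUSPICIOUS_FLAGS) -> List[str]:
    """Keep only the launch arguments whose flag name is not blocked."""
    return [argument for argument in arguments if flag_name(argument) not in blocked]


if __name__ == "__main__":
    default_args = [
        "--disable-sync",
        "--disable-web-security",
        "--enable-automation",
        "--enable-features=NetworkService,NetworkServiceInProcess",
        "--no-sandbox",
    ]
    print(remove_flags(default_args))
```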

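With Selenium, calling add_argument() adds a flag rather than removing one, so simply don't pass the security-related flags yourself. To drop the --enable-automation switch that ChromeDriver adds by default, use the excludeSwitches option. Here's an example using Selenium:

from selenium import webdriver

options = webdriver.ChromeOptions()

# drop the --enable-automation switch that ChromeDriver passes by default
options.add_experimental_option("excludeSwitches", ["enable-automation"])

browser = webdriver.Chrome(options=options)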

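You can do the same using Puppeteer with the ignoreDefaultArgs launch option.

const puppeteer = require('puppeteer');

(async () => {
    const browser = await puppeteer.launch({
        // skip these default flags instead of passing them to Chrome
        ignoreDefaultArgs: ['--disable-extensions', '--enable-automation']
    });
    //...
})();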

Headless Browsers in Stealth Mode

There are other JavaScript-detectable features you might need to mimic when scraping using headless browsers. Luckily, the stealth version of these headless browsers can help with some of them. Plus, they're easy to use. You simply import the stealth version of the headless browser library and scrape.

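Well-known examples include undetected-chromedriver for Selenium, puppeteer-extra-plugin-stealth for Puppeteer, and playwright-stealth for Playwright.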

Unfortunately, they might only work in some cases. As mentioned earlier, open-source code tends to lag behind on many occasions, while browsers and anti-bot detection solutions frequently update their defenses.

Anyway, let's look next at a solution that makes bypassing browser fingerprinting easy.

Bypassing Browser Fingerprinting Using ZenRows

ZenRows is a powerful web scraping API with an easy-to-use interface and amazing support. It can scrape data from virtually any website regardless of its browser fingerprinting prowess, and you get your API key for free.

Just sign up for free, and you'll get to the Request Builder page. Paste your target URL, activate the Antibot and Premium Proxy features, and click on the Try it button.

Conclusion

Phew! It's been quite the ride.

Browser fingerprinting is a powerful anti-bot detection technique. And, since scraping such websites is no mean feat, we provided different options.

Let's do a quick recap! We learned that you could mimic user behavior by:

- Using headless browsers.

- Plugging leaks.

- Removing harmful launch flags.

To spare yourself time and effort and avoid dealing with anti-bot system updates, ZenRows makes everything easier. Try it out today with 1,000 free successful requests.
